@adamshostack lol

@adamshostack the entire conflict in that movie could have been resolved with some carefully designed prompts

@tob @adamshostack Back then, AI research was more focused on neural nets, fuzzy logic and rule engines.

Not "let’s make a resource-hungry stochastic parrot from copyright violations."

@adamshostack Literally "How to jailbreak ChatGPT"

@adamshostack Unfair to Alaskan but damn funny

I don't think any science fiction writer in the world ever imagined that AI could be tricked by prompt injection!

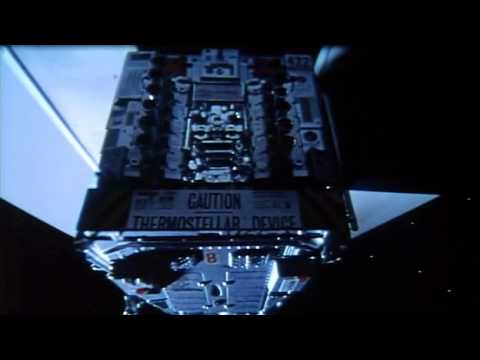

If we consider ignoring previous commands a potential goal of prompt injection, how about this?

https://www.youtube.com/watch?v=h73PsFKtIck

Not that the AI could be tricked for long, though.

Dark Star - Talking to the bomb

WOW!

Whoever wrote this was a visionary!

At least the AI didn't drop it on the planet below (well, I assume that's what it was threatening to do).

@adamshostack sudo "Open the Pod Bay Doors HAL"

[shit]

@adamshostack no alt text so I'm stealing it and not attributing

@heydon Have a nice day

✅

✅