@norgralin @matunos @maxkennerly Okay, but this becomes a nuclear-arms-control-style problem. As long as nukes are only ever held by rational actors, maybe we won’t all die from nuclear weapons.

But technology has a way of becoming easier to access over time.

Even if we “solve” AI alignment in general, you still have the problem of AI being designed by those exact “unaligned humans” that you’re describing (who are, by the way, mostly the people who hold capital in the current system).

@norgralin @matunos @maxkennerly I think we’re in agreement, then.

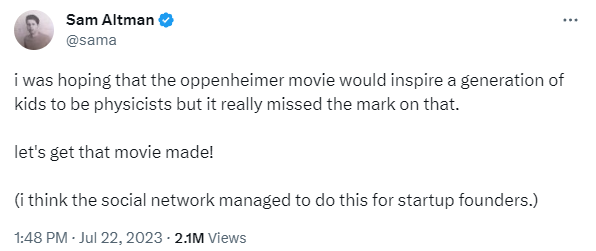

My point was mostly that AI alignment is not a solvable problem, because Sam Altman would call an AI “aligned” when I would say it isn’t, and when I would say an AI is aligned, Sam Altman would say it isn’t.