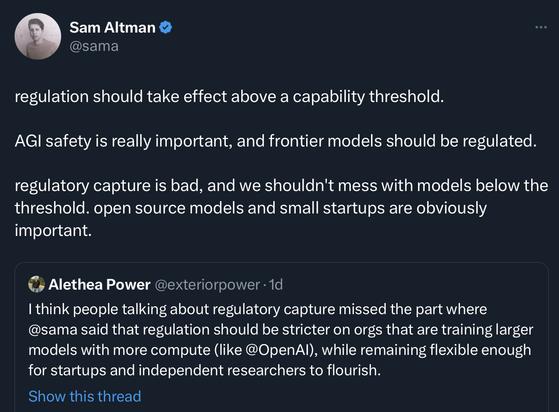

Altman would rather talk about regulating sci-fi scenarios than about the real-world consequences of how AI can be used to do harm right now. That sidesteps things like protection from discrimination, fairness, privacy, and the ability to receive remedy.

@Riedl That last paragraph of Sam Altman's is a really good example of this thing that he and many other SV types do. They take something (here 'regulatory capture') that is gaining public notice and a growing consensus on its valence, but which they themselves view with the opposite valence. When they find they can't flip the consensus to match their view, they try to redirect the term to mean something completely different, where the existing valence helps them.

@Riedl E.g., in the example above (and elsewhere) they are trying to get people to use 'regulatory capture' to mean 'capture of companies/innovation by those dastardly regulators', while keeping the strongly negative consensus that has formed around 'regulatory capture'. That neatly changes how people's feelings translate into public pressure: from something that threatens the SV owning class to something that benefits it.