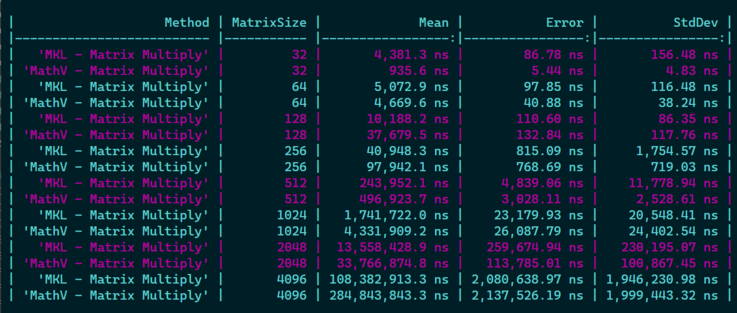

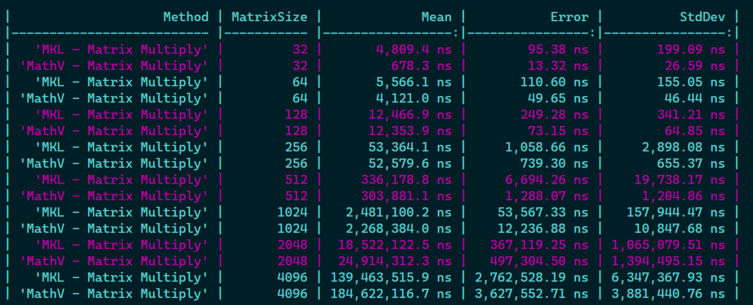

Not that bad for a first try, but I will have to dig further into whether 1) I can optimize things further with some fancy AVX2 instructions, and 2) I can improve cache locality when going parallel.
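The cache-locality idea is usually approached with loop tiling: compute the product block by block so that the part of B being read is reused for many rows of A while it is still in cache. Below is a minimal scalar sketch in C (not the C# code from this thread), assuming row-major `float` matrices. The names `matmul_naive` and `matmul_tiled` are hypothetical, and `TILE = 64` is an arbitrary assumption rather than a tuned value.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical tile size: a real implementation would tune it to the cache sizes. */
#define TILE 64

static int min_int(int a, int b) { return a < b ? a : b; }

/* Reference: C[MxN] = A[MxK] * B[KxN], all row-major. */
void matmul_naive(const float *A, const float *B, float *C, int M, int N, int K)
{
    for (int i = 0; i < M; i++)
        for (int j = 0; j < N; j++) {
            float s = 0.0f;
            for (int k = 0; k < K; k++)
                s += A[(size_t)i * K + k] * B[(size_t)k * N + j];
            C[(size_t)i * N + j] = s;
        }
}

/* Same product with loop tiling: each TILE x TILE block of B is reused for
 * up to TILE rows of A while it is (hopefully) still in cache, and the
 * innermost loop walks B and C contiguously. */
void matmul_tiled(const float *A, const float *B, float *C, int M, int N, int K)
{
    assert(M >= 0 && N >= 0 && K >= 0);
    memset(C, 0, (size_t)M * N * sizeof(float));
    for (int i0 = 0; i0 < M; i0 += TILE)
        for (int k0 = 0; k0 < K; k0 += TILE)
            for (int j0 = 0; j0 < N; j0 += TILE) {
                const int i1 = min_int(i0 + TILE, M);
                const int k1 = min_int(k0 + TILE, K);
                const int j1 = min_int(j0 + TILE, N);
                for (int i = i0; i < i1; i++)
                    for (int k = k0; k < k1; k++) {
                        const float a = A[(size_t)i * K + k];
                        for (int j = j0; j < j1; j++)
                            C[(size_t)i * N + j] += a * B[(size_t)k * N + j];
                    }
            }
}
```

Tiling only addresses cache reuse; the SIMD part (AVX2) and the split across threads would come on top of this loop structure.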


This is the first time I've had to write an algorithm that takes such things into account; it's pretty interesting, and impressive, how much they can change the results! 🏎️

@xoofx That’s awesome 😀 And you sent me down a rabbit hole as a performance newbie (but a user of MKL and IPP). Without tiling and multi-threading, I managed to get 6.5x MKL’s perf on a 500x500 matrix. I read about a technique called register blocking (https://gist.github.com/nadavrot/5b35d44e8ba3dd718e595e40184d03f0#register-blocking), which says that with 3x4 blocks we can use all 16 SIMD registers (3 reused in the inner loop, 1 to loop over, and 3*4 accumulators: 3 + 1 + 12 = 16). That’s what performs best so far, but when I look at the disassembly, it doesn’t seem to reuse registers as suggested.

https://sharplab.io/#gist:67fcbace16c33703b6a6c5c3b59a58f2

Is it possible to make it reuse the registers like in the gist? I wonder whether you used a similar technique.
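The 3x4 register-blocking layout described above can be sketched in plain C: 12 accumulators for a 3x4 block of C, 3 values of A reused across the whole block, and 1 value of B loaded at a time and consumed by 3 accumulators. This is a scalar sketch of the loop structure only, not the C# `Vector256` code from the thread: in an AVX2 version each B load and each accumulator would be a 256-bit vector of 8 floats and the A values would be broadcasts, which is where the 3 + 1 + 12 = 16 registers come from. It assumes row-major `float` matrices with M divisible by 3 and N divisible by 4 (no edge handling), and the function names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Naive reference: C[MxN] = A[MxK] * B[KxN], row-major. */
void matmul_naive(const float *A, const float *B, float *C, int M, int N, int K)
{
    for (int i = 0; i < M; i++)
        for (int j = 0; j < N; j++) {
            float s = 0.0f;
            for (int k = 0; k < K; k++)
                s += A[(size_t)i * K + k] * B[(size_t)k * N + j];
            C[(size_t)i * N + j] = s;
        }
}

/* 3x4 register-blocked kernel (scalar sketch).
 * Precondition: M % 3 == 0 and N % 4 == 0. */
void matmul_3x4(const float *A, const float *B, float *C, int M, int N, int K)
{
    assert(M % 3 == 0 && N % 4 == 0);
    for (int i = 0; i < M; i += 3) {
        const float *a0p = A + (size_t)i * K;   /* rows i, i+1, i+2 of A */
        const float *a1p = a0p + K;
        const float *a2p = a1p + K;
        for (int j = 0; j < N; j += 4) {
            /* 12 accumulators, one per element of the 3x4 block of C */
            float c00 = 0, c01 = 0, c02 = 0, c03 = 0;
            float c10 = 0, c11 = 0, c12 = 0, c13 = 0;
            float c20 = 0, c21 = 0, c22 = 0, c23 = 0;
            for (int k = 0; k < K; k++) {
                /* 3 values of A, reused for all 4 columns of the block */
                const float a0 = a0p[k], a1 = a1p[k], a2 = a2p[k];
                const float *brow = B + (size_t)k * N + j;
                float b;
                /* 1 value of B at a time, consumed by 3 accumulators */
                b = brow[0]; c00 += a0 * b; c10 += a1 * b; c20 += a2 * b;
                b = brow[1]; c01 += a0 * b; c11 += a1 * b; c21 += a2 * b;
                b = brow[2]; c02 += a0 * b; c12 += a1 * b; c22 += a2 * b;
                b = brow[3]; c03 += a0 * b; c13 += a1 * b; c23 += a2 * b;
            }
            float *r0 = C + (size_t)i * N + j, *r1 = r0 + N, *r2 = r1 + N;
            r0[0] = c00; r0[1] = c01; r0[2] = c02; r0[3] = c03;
            r1[0] = c10; r1[1] = c11; r1[2] = c12; r1[3] = c13;
            r2[0] = c20; r2[1] = c21; r2[2] = c22; r2[3] = c23;
        }
    }
}
```

Whether the 12 accumulators actually stay in registers is up to the compiler (or JIT), so, as in the thread, the disassembly is where to confirm it.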

That said, getting 6x over MKL seems really suspicious 😉, because at the asm level the code can't really be optimized much beyond what MKL does, so I would double-check things (e.g. the correctness of the results, which version of MKL is used, how it is configured, etc.).
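One way to do that correctness check is to compare the fast kernel's output against a straightforward reference accumulated in double precision and report a relative error. Below is a minimal C sketch, assuming row-major `float` matrices; the names `matmul_ref_double` and `rel_max_error` are hypothetical, and the error measure (max absolute error divided by the max absolute reference value) is just one reasonable choice.

```c
#include <assert.h>
#include <stddef.h>

/* Reference product accumulated in double precision:
 * R[MxN] = A[MxK] * B[KxN], all row-major. */
void matmul_ref_double(const float *A, const float *B, double *R, int M, int N, int K)
{
    assert(M >= 0 && N >= 0 && K >= 0);
    for (int i = 0; i < M; i++)
        for (int j = 0; j < N; j++) {
            double s = 0.0;
            for (int k = 0; k < K; k++)
                s += (double)A[(size_t)i * K + k] * (double)B[(size_t)k * N + j];
            R[(size_t)i * N + j] = s;
        }
}

/* max_t |C[t] - R[t]| / max_t |R[t]|, a simple normwise relative error.
 * Falls back to the absolute error when R is all zeros. */
double rel_max_error(const float *C, const double *R, size_t n)
{
    double max_err = 0.0, max_ref = 0.0;
    for (size_t t = 0; t < n; t++) {
        double e = (double)C[t] - R[t];
        double r = R[t];
        if (e < 0) e = -e;
        if (r < 0) r = -r;
        if (e > max_err) max_err = e;
        if (r > max_ref) max_ref = r;
    }
    return max_ref > 0.0 ? max_err / max_ref : max_err;
}
```

A float kernel is expected to land at a small relative error that grows with K, while a kernel that skips work or returns garbage shows up as an error close to 1.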

@xoofx I tried replacing the struct with 12 separate Vector256 variables, but gave up since I got the same disassembly back 😕. After your answer, though, it sounds like I need to experiment more; maybe I just placed them badly.

Oh, and I’m so sorry for the misunderstanding: my perf is 6 times worse than MKL's, of course 😃 (~3 times worse now with 2 threads). I actually wanted to sound humble, not like I was bragging.

Thanks very much for your answer!

@neilhenning Yeah, but I have not seen anybody using them for practical BLAS implementations (and MKL is unlikely to), as they come with lots of constraints that don't fit well with, e.g., SIMD register pressure.

I really don't know what they are doing. It's also quite sad that it's a closed-source library, as we can't replicate it or learn from it.

@xoofx If you have a sampling profiler, it'll probably point you to the main loop in the binary that you're interested in pretty quickly. There's at least a chance it's written in assembly, so you might not be missing much? Not sure, but that's how I'd try to learn from it.

(And IANAL, but I suspect "replicating" is a grey area, regardless of whether or not it's open source.)

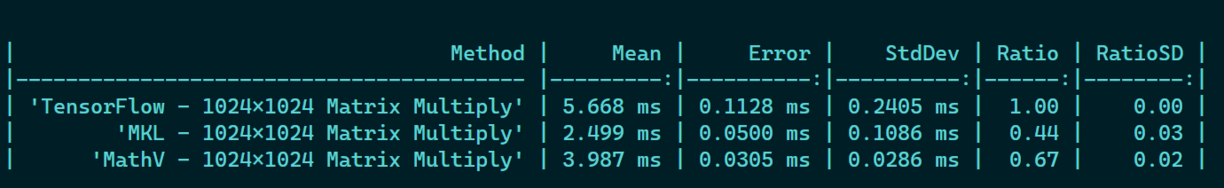

@nietras I think they are still using an optimized version (SIMD + MT); otherwise they wouldn't be able to get that low, but... for some reason, my version is doing a good job! 😅

MKL is difficult to beat, though. I'm gonna keep my current version and move on. I don't want to spend too much time on something that others have been optimizing for years...

@xoofx Definitely a good job. You might have looked at the ML.NET code too, though I'm not sure how optimized it really is.

machinelearning/CpuMathUtils.netcoreapp.cs at 04dda55ab0902982b16309c8e151f13a53e9366d · dotnet/machinelearning


@xoofx Maybe compare with BLAS too?

Where do accuracy and precision fall relative to speed? (For comparison: a function that immediately returns is a very fast matrix multiplication, but it is neither accurate nor precise.)