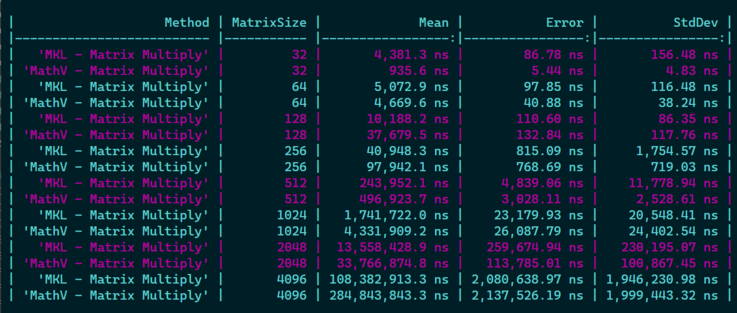

Not that bad for a first try, but I will have to dig further to see whether 1) I can optimize things further with some fancy AVX2 instructions, and 2) I can improve cache locality when going parallel.

This is the first time I have had to write an algorithm that takes such things into account. It's pretty interesting, and impressive how much it can change the results! 🏎️

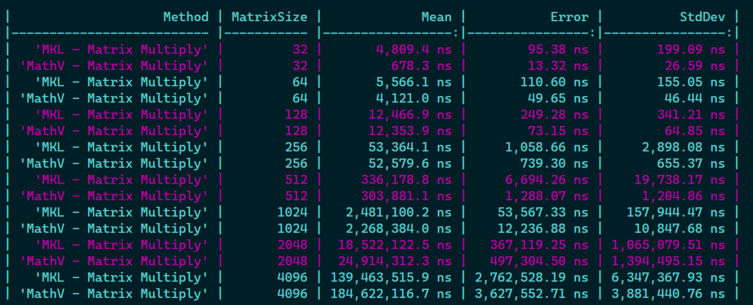

@xoofx That’s awesome 😀 And you sent me down a rabbit hole as a performance newbie (but a user of MKL and IPP). Without tiling and multi-threading I managed to get 6.5x MKL’s perf on a 500x500 matrix. I read about a technique called register blocking https://gist.github.com/nadavrot/5b35d44e8ba3dd718e595e40184d03f0#register-blocking and it said that by doing 3x4 blocks we can use all 16 SIMD registers (3 reused in the inner loop, 1 to loop over and 3*4 accumulators = 16). That’s what performs best so far, but when I look at the disassembly it doesn’t seem to re-use registers as suggested.

https://sharplab.io/#gist:67fcbace16c33703b6a6c5c3b59a58f2

Is it possible to make it re-use the registers like in the gist? I wonder whether you used a similar technique.
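For readers following along, here is a rough scalar sketch of the register-blocking idea, written in C++ like the linked gist rather than in C#. It is not the code from the sharplab link: the function names `matmul_naive` and `matmul_blocked_3x4` are made up for illustration, and each accumulator is a plain `float` where the real kernel would hold a `Vector256<float>`. Each 3x4 tile of the result stays in 12 local accumulators for the whole inner loop and is written back once.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Reference triple loop: C = A * B for row-major n x n matrices.
std::vector<float> matmul_naive(const std::vector<float>& a,
                                const std::vector<float>& b, std::size_t n) {
    assert(a.size() == n * n && b.size() == n * n);
    std::vector<float> c(n * n, 0.0f);
    for (std::size_t i = 0; i < n; ++i)
        for (std::size_t k = 0; k < n; ++k)
            for (std::size_t j = 0; j < n; ++j)
                c[i * n + j] += a[i * n + k] * b[k * n + j];
    return c;
}

// Register-blocked sketch: a 3x4 tile of C is accumulated in 12 locals.
// Tile sizes follow the 3x4 layout mentioned above; the names are hypothetical.
std::vector<float> matmul_blocked_3x4(const std::vector<float>& a,
                                      const std::vector<float>& b, std::size_t n) {
    assert(a.size() == n * n && b.size() == n * n);
    constexpr std::size_t MR = 3, NR = 4;
    std::vector<float> c(n * n, 0.0f);
    for (std::size_t i0 = 0; i0 < n; i0 += MR) {
        for (std::size_t j0 = 0; j0 < n; j0 += NR) {
            // Edge tiles may be smaller when n is not a multiple of 3 or 4.
            const std::size_t mr = std::min(MR, n - i0);
            const std::size_t nr = std::min(NR, n - j0);
            float acc[MR][NR] = {};  // the 12 accumulators
            for (std::size_t k = 0; k < n; ++k) {
                for (std::size_t i = 0; i < mr; ++i) {
                    const float av = a[(i0 + i) * n + k];  // one value of A, reused across the row
                    for (std::size_t j = 0; j < nr; ++j)
                        acc[i][j] += av * b[k * n + j0 + j];
                }
            }
            // Write the finished tile back to C once.
            for (std::size_t i = 0; i < mr; ++i)
                for (std::size_t j = 0; j < nr; ++j)
                    c[(i0 + i) * n + j0 + j] = acc[i][j];
        }
    }
    return c;
}
```

Whether the 12 accumulators really stay in registers is up to the compiler or JIT, so checking the disassembly, as done above, is still the only way to be sure.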

Though, getting 6x over MKL seems really suspicious 😉 because the code at the asm level cannot really be optimized more than MKL, so I would double check (e.g. the correctness of the results, which version of MKL is used, how it is configured, etc.)
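One possible way to do the correctness part of that double check is to compare the fast kernel's output element by element against a trusted reference (a naive triple loop, or MKL itself) with a tolerance, since float summation order can differ between implementations. The helper below is only a sketch in C++: the name `all_close` and the default tolerances are arbitrary assumptions, not something taken from MKL or from the code discussed here.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Returns true when every element of `got` is within atol + rtol * |ref[i]| of `ref`.
// Hypothetical helper; the default tolerances are illustrative, not tuned.
bool all_close(const std::vector<float>& got, const std::vector<float>& ref,
               float rtol = 1e-4f, float atol = 1e-6f) {
    assert(rtol >= 0.0f && atol >= 0.0f);
    if (got.size() != ref.size()) return false;
    for (std::size_t i = 0; i < got.size(); ++i) {
        const float diff = std::fabs(got[i] - ref[i]);
        // Written as !(diff <= tol) so that a NaN in either input fails the check.
        if (!(diff <= atol + rtol * std::fabs(ref[i]))) return false;
    }
    return true;
}
```

Running a check like this on a few sizes that are not multiples of the block size tends to catch edge-handling bugs that a benchmark alone would not reveal.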

@xoofx I tried it without the struct, using 12 separate Vector256 variables, but then gave up since I got the same disassembly back 😕 After your answer, though, it sounds like I need to experiment more; maybe I just placed them badly.

Oh and I’m so sorry for the misunderstanding. I have 6 times worse perf than MKL of course 😃 (~3 now with 2 threads). I actually wanted to sound humble instead of bragging.

Thanks very much for your answer