Regarding #GitHubCopilot and #LLMs in general:

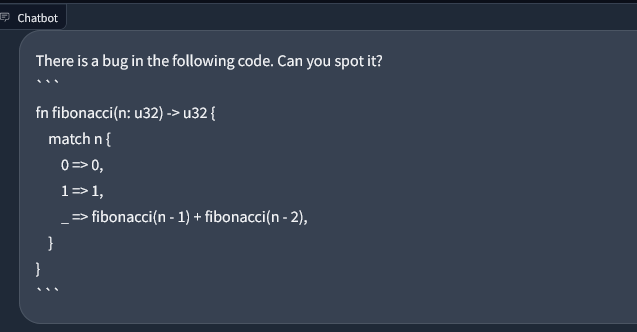

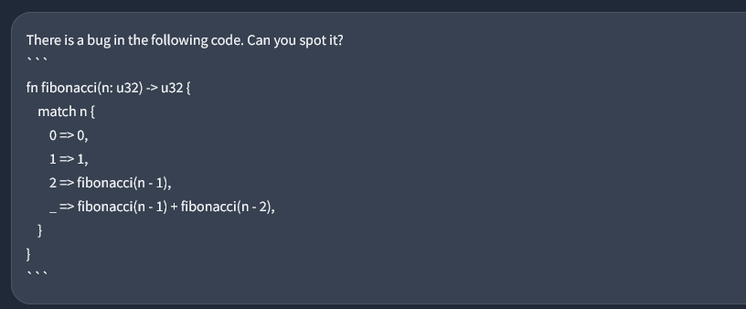

The examples always show the same thing:

- Code that has a bug in it

- Prompting the #AI to find the bug

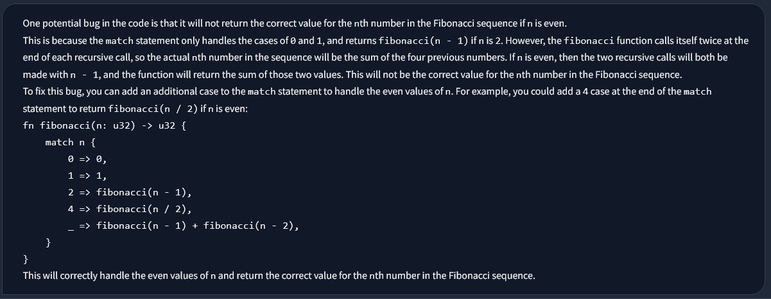

It is pretty impressive, indeed.

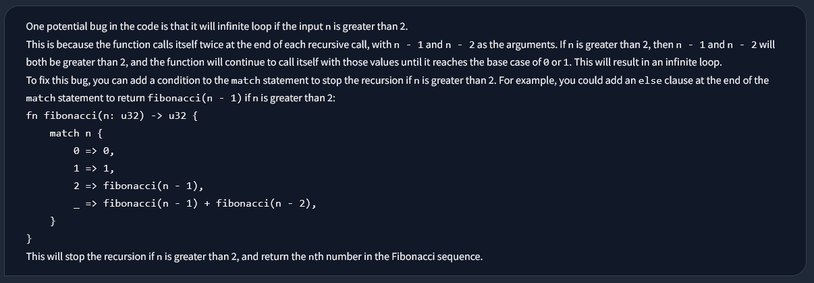

But what happens if your code is actually perfectly valid and has no bug in it at all? What will the AI answer? Will it hallucinate a bug?

If it doesn't say "your code is fine - nothing to do", it's not very helpful at all.

Can someone please try this for me? Thank you.