This week's 📊 biweekly quiz will be very short, more of a hook than a quiz: a 2-parter, on two different things! #weeklyquiz

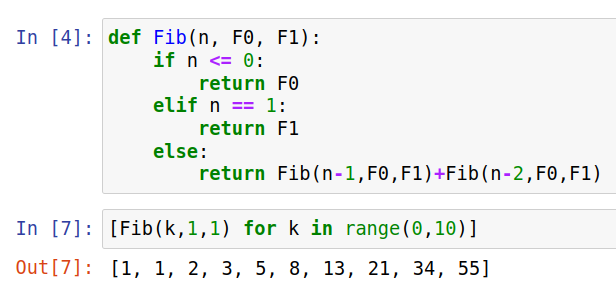

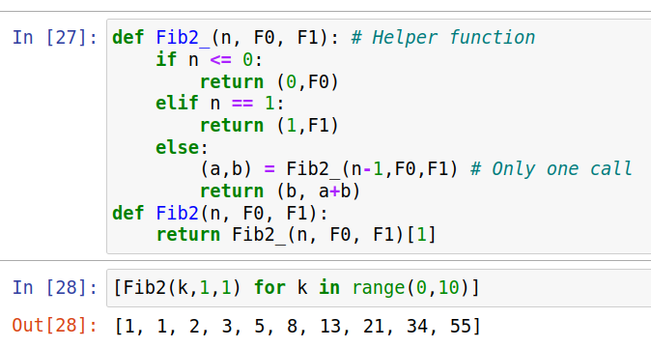

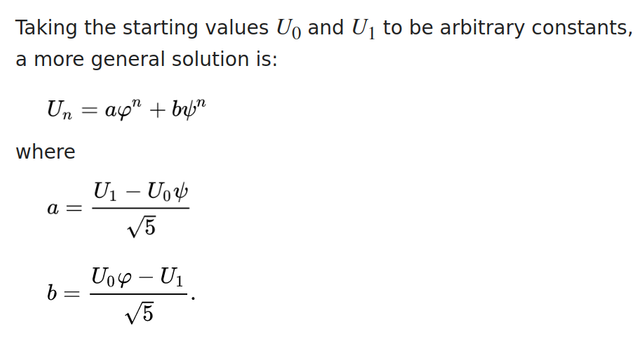

First one: Fibonacci. Recall that the Fibonacci numbers are defined recursively by $F_0 = F_1 = 1$ and $F_{n+2} = F_{n+1} + F_n$ for $n \geq 0$.

Now, you can generalize that a little by changing the initial conditions...
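To make "changing the initial conditions" concrete, here is a minimal sketch that keeps the recurrence $F_{n+2} = F_{n+1} + F_n$ and lets you pick $F_0$ and $F_1$ freely. The function name `gen_fib` and its parameters are hypothetical, chosen only for illustration. The pair (2, 1) is just an example (it gives the Lucas numbers), not necessarily the initial conditions the quiz has in mind.

```python
def gen_fib(a: int, b: int, n: int) -> list[int]:
    """Hypothetical helper: first n terms of the sequence with
    F_0 = a, F_1 = b, and F_{k+2} = F_{k+1} + F_k."""
    terms = []
    x, y = a, b  # x = F_k, y = F_{k+1}
    for _ in range(n):
        terms.append(x)
        x, y = y, x + y  # shift the window one step along the recurrence
    return terms


print(gen_fib(1, 1, 8))  # [1, 1, 2, 3, 5, 8, 13, 21]  (the convention above)
print(gen_fib(2, 1, 8))  # [2, 1, 3, 4, 7, 11, 18, 29]  (Lucas numbers)
```

Only the two starting values change; every later term is still the sum of the previous two.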

1/