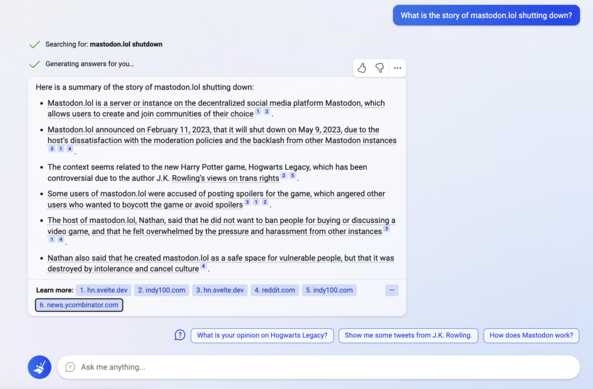

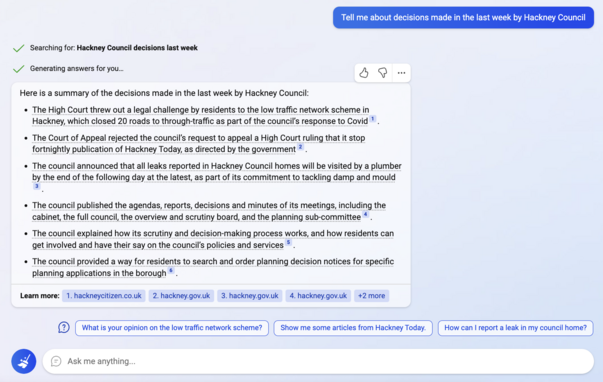

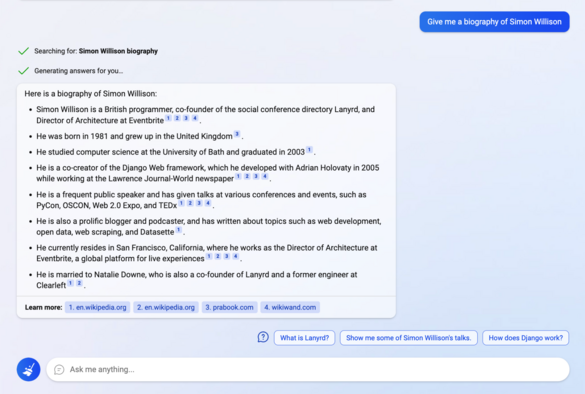

There are a LOT of screenshots of the current Bing floating around right now where it gives hilariously bad answers to questions. This is NOT the new Bing though: this is Bing's existing version of Google's "featured snippets".

The new Bing is still behind a waitlist for most people. I've attached a screenshot of it, taken from this Verge article: https://www.theverge.com/2023/2/7/23587454/microsoft-bing-edge-chatgpt-ai