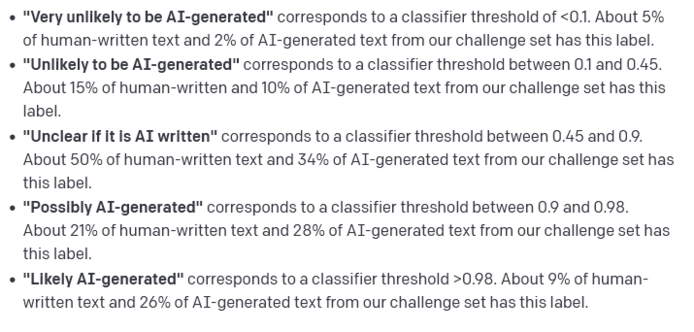

OpenAI released a tool that purports to detect AI-generated text. At the highest end of detection, it labels text "possibly" or "likely" AI-generated. 21% of human-written text falls under "possibly" and 9% falls under "likely". Together, that's 3 in 10 students being defamed and/or harmed.
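To make the arithmetic behind "3 in 10" explicit, here is a minimal sketch. It takes the 21% and 9% figures quoted above at face value and assumes the two labels are mutually exclusive, so the rates can simply be added. The class size of 30 and the function names are hypothetical, chosen only for illustration.

```python
from fractions import Fraction

# Rates quoted in this post for human-written text (taken at face value).
POSSIBLY_AI = Fraction(21, 100)
LIKELY_AI = Fraction(9, 100)


def flagged_rate(possibly=POSSIBLY_AI, likely=LIKELY_AI):
    """Share of human-written texts given either label.

    Assumes a text gets at most one of the two labels, so the rates add.
    """
    return possibly + likely


def expected_flagged(n_texts, rate=None):
    """Expected number of human-written texts flagged out of n_texts."""
    if rate is None:
        rate = flagged_rate()
    return n_texts * rate


if __name__ == "__main__":
    rate = flagged_rate()
    print(f"Share of human-written text flagged: {float(rate):.0%}")
    # Hypothetical class of 30 students, each submitting one human-written essay.
    print(f"Expected flagged essays in a class of 30: {float(expected_flagged(30))}")
```

Under those assumptions, a class where every essay is human-written would still expect roughly one essay in three to come back flagged.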

Just like other providers of academic surveillance software, OpenAI states that their detector "should not be used as a primary decision-making tool".

It will be, though. And harm will follow.

https://openai.com/blog/new-ai-classifier-for-indicating-ai-written-text/