Protip:

When designing a user interface, imagine some old woman using it, say Margaret Hamilton, and she's clicking your app's buttons and saying to you, as old people do,

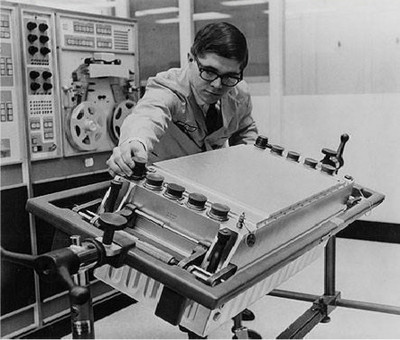

"Young whippersnapper, when I was your age, I sent 24 people to the ACTUAL MOON with my software in 4K of RAM, and here I am clicking your button and it takes ten seconds to load a 50-megabyte video ad and then it crashes.

I'm not even ANGRY with you, I'm just disappointed."