@JMMaok @pensato @futurebird

@jeffjarvis @jayrosen_nyu

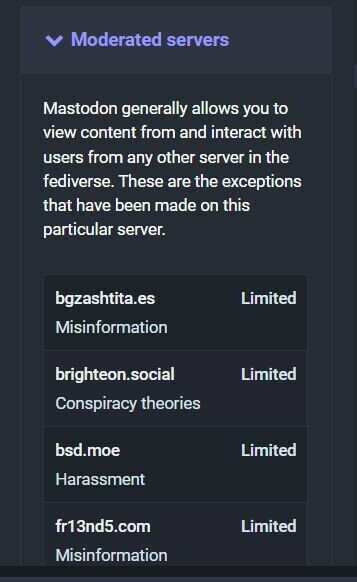

J, really good start. Needs politics re known, well-understood, provably unreliable sources and disinformation spreaders; need help from the Trust Project and the Journalism Trust Initiative from Reporters Without Borders.