Optimizing the thread pool for webhooks and async calls in Spring Boot: a practical guide that helps Java developers get the most out of their backend services. Share your experience and feedback!

#java #springboot #threadpool #webhook #async #lậptrình #spring_boot

I just finished writing a simple #pipeline structure that takes in a container of one type and processes it "#java-style #stream". It also supports adding a #threadpool, effectively parallelizing the processing of any type of data structure.

Sadly, I found out I'll probably have no use for it, especially since something so generalized is probably slower than any bespoke implementation I currently have.

If anyone is interested, I might upload it separately.

Static DAG graphs: why TBB is sometimes overkill and how to build a scheduler with guaranteed execution time

Many thread pools are optimized for dynamic spawning and an endless backlog. This article presents an approach for the opposite case: a fixed DAG, a single run, and full control over behavior.

Problem: Modern schedulers such as TBB are optimized for dynamic workloads, but in practice up to 40% of cases (game engines, offline rendering) use fixed DAG graphs, where...

Oh boy, I have a lead! And it's NOT related to #TLS. I finally noticed another pattern: #swad only #crashed when running as a #daemon. Daemonizing itself wasn't the problem; the default logging configuration attached to it was: "fake async" logging, done by letting a #threadpool job write the log.

Forcing THAT even when running in the foreground, I can finally reproduce a crash. And I wouldn't be surprised if that was actually the reason for crashing "pretty quickly" with #LibreSSL (and only rarely with #OpenSSL). I mean, something going rogue in your address space can have the weirdest effects.

When a ThreadPoolExecutor in Java gets one task more than it can handle, it applies a rejection policy. Of the four built-in policies, three drop a task (the new one, either silently or with an exception, or the oldest queued one), and one runs the submitted task on the calling thread. No built-in policy simply blocks the calling thread until the ThreadPoolExecutor has capacity again. This can be detrimental to overall throughput, as this demo shows:

Now that #swad 0.7 is released, it's time to prepare a new release of #poser, my own lib supporting #services on #POSIX systems, following a #reactor with #threadpool design.

During development of swad, I moved poser from using strictly POSIX APIs (with the scalability limits of e.g. #select) to auto-detected support for #kqueue, #epoll, #eventports, #signalfd and #timerfd (so now it could, in theory(!), "compete" with e.g. libevent). I also fixed quite a few hidden bugs, and added more base functionality, like a #dictionary using nested hashtables internally, or #async tasks mimicking the async/await pattern known from e.g. #csharp. I also deprecated two features, the periodic and global "service tick" (superseded by individual timers) and the "resolve hosts" property of a "connection" (superseded by a separate resolve class).

I'll have to decide on a few things, e.g. whether I'll remove the deprecated stuff immediately and bump the major version of the "posercore" lib. I guess I'll do just that. I'd also like to add all the web-specific stuff (http 1.0/1.1 server) that's currently part of the swad code as a "poserweb" lib. This would get a major version of 0, indicating a generally unstable API/ABI as of now....

And then, I'd have to decide where certain utility classes belong. The rate limiter is probably useful for things other than web, so it should probably go to core. What about url encoding/decoding, for example? 🤔

Stay tuned, something will come here, maybe helping you to write a nice service in plain #C 😎:

Revisiting #async / #await in #POSIX C, trying to "add some #security" 🙈

Recap: Consider a classic #reactor-style service in C with a #threadpool attached to run the individual request handlers. When such a handler needs to do some I/O, it'll have to wait for its completion, and doing so is kind of straightforward: just block the worker thread executing the job until whatever I/O was needed completes.

Now, blocking a thread is never a great thing to do and I recently tooted about an interesting alternative I found: Make use of the (unfortunately deprecated) POSIX user context switching to enable releasing the worker thread while waiting. In a nutshell, you create a context with #makecontext that has its own private #stack, and then you can use #swapcontext to get off the thread, and later again to get back on the thread. A minor issue is: It must be the *same* thread ... so you might have to wait until it completes something else before you can resume your job. But then, that's probably okayish, you can make sure in your job scheduling to only use worker threads with awaited tasks attached when no other thread is available.
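For readers who haven't used the user context API, here is a minimal sketch of the mechanism described above. It is not poser's code: the names `job`, `run_job_demo` and `progress` are made up for illustration, the stack comes from plain `malloc`, and everything runs on a single thread. It assumes a platform that still ships `<ucontext.h>` (e.g. glibc or the BSDs).

```c
#include <assert.h>
#include <stdlib.h>
#include <ucontext.h>

#define JOB_STACK_SIZE (64 * 1024)  /* private stack for the job */

static ucontext_t worker_ctx;  /* where the worker thread continues */
static ucontext_t job_ctx;     /* the job, running on its private stack */
static volatile int progress;  /* demo only: how far the job got */

/* Hypothetical job: works, "awaits" something, then finishes. */
static void job(void)
{
    progress = 1;
    /* await: save the job's state and get off the thread */
    swapcontext(&job_ctx, &worker_ctx);
    /* resumed: an awaited result would be available here */
    progress = 2;
}   /* returning from job() switches to uc_link, i.e. worker_ctx */

/* Returns the job's final progress (2 on success), or -1 on error. */
int run_job_demo(void)
{
    void *stack = malloc(JOB_STACK_SIZE);
    if (!stack) return -1;
    if (getcontext(&job_ctx) < 0) { free(stack); return -1; }
    job_ctx.uc_stack.ss_sp = stack;
    job_ctx.uc_stack.ss_size = JOB_STACK_SIZE;
    job_ctx.uc_link = &worker_ctx;
    makecontext(&job_ctx, job, 0);

    /* start the job; this call returns as soon as the job awaits */
    if (swapcontext(&worker_ctx, &job_ctx) < 0) { free(stack); return -1; }
    assert(progress == 1);  /* suspended, not finished */

    /* ... the worker thread is free to run other jobs here ... */

    /* resume the job; this call returns when job() has returned */
    if (swapcontext(&worker_ctx, &job_ctx) < 0) { free(stack); return -1; }
    free(stack);
    return progress;
}
```

In a real pool, the second `swapcontext` would be triggered by the completion of the awaited task and, as noted above, has to happen on the same thread that ran the first one.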

In my first implementation, I just used #malloc to create a 64 KiB private stack for each thread job. That's perfectly fine if you can guarantee your job will never consume more stack space, AND it won't have any vulnerabilities allowing some attacker to mess with the stack. But in practice, especially for a library offering this async/await implementation, it's nothing but a wild #CVE generator.

So, I now improved on that:

* Allocate a much larger stack of now 2 MiB. That alone makes issues at least less likely. And on a sane modern OS, we can still assume pages will only be mapped "on demand".

* Only allocate the stack directly before running the thread job, and delegate allocation to some internal "stack manager" that keeps track of all allocated stacks and reuses them, only freeing them on exit. This should avoid most of the allocation overhead.

* If MAP_ANON / MAP_ANONYMOUS is available, use #mmap for allocating the stack. That at least gives a chance to stay away from other allocations ....

* But finally, if MAP_STACK is available, use this flag! From my research, #FreeBSD, #OpenBSD and #NetBSD will for example make sure there's at least one "guard page" below a stack mapped with this flag, so a stack overflow consistently takes the SIGSEGV emergency exit 😆. #Linux knows this flag as well, but doesn't seem to implement such protection at this time ... 🤔
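The allocation strategy from the list above could be sketched roughly as follows. This is an illustration, not poser's actual stack manager: `stack_get`, `stack_put` and the fixed-size cache are hypothetical names and simplifications, the cache is not thread-safe, and unlike the description above it unmaps a stack when the cache is full instead of keeping everything until exit.

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>
#include <sys/mman.h>

#if !defined(MAP_ANONYMOUS) && defined(MAP_ANON)
#  define MAP_ANONYMOUS MAP_ANON
#endif

#define JOB_STACK_SIZE ((size_t)2 * 1024 * 1024)  /* 2 MiB per job */
#define MAX_CACHED 16                             /* arbitrary demo limit */

static void *cached[MAX_CACHED];  /* stacks waiting to be reused */
static size_t ncached;

static void *stack_map(void)
{
#ifdef MAP_ANONYMOUS
    int flags = MAP_PRIVATE | MAP_ANONYMOUS;
#  ifdef MAP_STACK
    flags |= MAP_STACK;  /* lets the OS treat the mapping as a stack */
#  endif
    void *stack = mmap(NULL, JOB_STACK_SIZE, PROT_READ | PROT_WRITE,
                       flags, -1, 0);
    return stack == MAP_FAILED ? NULL : stack;
#else
    return malloc(JOB_STACK_SIZE);  /* fallback without anonymous mmap */
#endif
}

static void stack_unmap(void *stack)
{
#ifdef MAP_ANONYMOUS
    munmap(stack, JOB_STACK_SIZE);
#else
    free(stack);
#endif
}

/* Get a stack right before running a job, reusing a cached one if any. */
void *stack_get(void)
{
    if (ncached > 0) return cached[--ncached];
    return stack_map();
}

/* Hand a stack back once the job has finished. */
void stack_put(void *stack)
{
    assert(stack != NULL);
    if (ncached < MAX_CACHED) cached[ncached++] = stack;
    else stack_unmap(stack);
}
```

Because the pages of an anonymous mapping are typically only backed by memory once touched, keeping a handful of 2 MiB stacks cached should cost far less than the nominal size suggests.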

Nice, #threadpool overhaul done. Removed two locks (#mutex) and two condition variables, replaced by a single lock and a single #semaphore. 😎 Simplifies the overall structure a lot, and it's probably safe to assume slightly better performance in contended situations as well. And so far, #valgrind's helgrind tool doesn't find anything to complain about. 🙃
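To illustrate what a pool built around one lock and one semaphore can look like, here is a small sketch. It is not the code from the commit: the fixed-size ring buffer, the NULL shutdown marker and all names (`pool_start`, `pool_enqueue`, `pool_stop`, `pool_demo`) are assumptions made for this example. It relies on unnamed POSIX semaphores (`sem_init`), which are not available on every platform, and may need `-pthread` when compiling.

```c
#include <assert.h>
#include <pthread.h>
#include <sched.h>
#include <semaphore.h>
#include <stdatomic.h>
#include <stddef.h>

#define NTHREADS  4
#define QUEUE_CAP 64

typedef void (*JobFn)(void *arg);
typedef struct { JobFn fn; void *arg; } Job;

static Job queue[QUEUE_CAP];  /* ring buffer, guarded by lock */
static int head, count;
static pthread_mutex_t lock = PTHREAD_MUTEX_INITIALIZER;  /* the one lock */
static sem_t jobs_queued;     /* the one semaphore: counts queued jobs */
static pthread_t threads[NTHREADS];

static int enqueue(JobFn fn, void *arg)
{
    pthread_mutex_lock(&lock);
    if (count == QUEUE_CAP) { pthread_mutex_unlock(&lock); return -1; }
    queue[(head + count++) % QUEUE_CAP] = (Job){ fn, arg };
    pthread_mutex_unlock(&lock);
    sem_post(&jobs_queued);   /* wakes at most one waiting worker */
    return 0;
}

static void *worker(void *unused)
{
    (void)unused;
    for (;;) {
        while (sem_wait(&jobs_queued) < 0) {}  /* retry if interrupted */
        pthread_mutex_lock(&lock);
        Job job = queue[head];
        head = (head + 1) % QUEUE_CAP;
        --count;
        pthread_mutex_unlock(&lock);
        if (!job.fn) return NULL;  /* NULL job is the shutdown marker */
        job.fn(job.arg);
    }
}

int pool_start(void)
{
    if (sem_init(&jobs_queued, 0, 0) < 0) return -1;
    for (int i = 0; i < NTHREADS; ++i)
        if (pthread_create(&threads[i], NULL, worker, NULL) != 0) return -1;
    return 0;
}

int pool_enqueue(JobFn fn, void *arg)
{
    assert(fn != NULL);  /* NULL is reserved for shutdown */
    return enqueue(fn, arg);
}

void pool_stop(void)
{
    /* one marker per worker, queued behind all pending jobs */
    for (int i = 0; i < NTHREADS; ++i)
        while (enqueue(NULL, NULL) < 0) sched_yield();
    for (int i = 0; i < NTHREADS; ++i) pthread_join(threads[i], NULL);
    sem_destroy(&jobs_queued);
}

static void count_job(void *arg) { atomic_fetch_add((atomic_int *)arg, 1); }

/* Runs 32 trivial jobs and returns how many were executed. */
int pool_demo(void)
{
    atomic_int done = 0;
    if (pool_start() < 0) return -1;
    for (int i = 0; i < 32; ++i)
        if (pool_enqueue(count_job, &done) < 0) return -1;
    pool_stop();
    return atomic_load(&done);
}
```

The semaphore replaces the usual "queue not empty" condition variable: its count always matches the number of queued jobs, so a worker that gets past `sem_wait` is guaranteed to find a job once it holds the lock.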

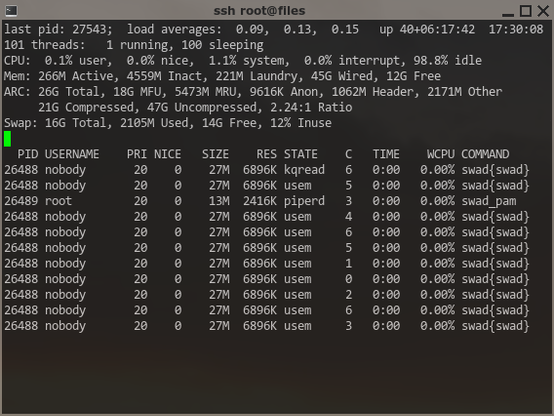

Looking at the screenshot, I should probably make #swad default to *two* threads per CPU and expose the setting in the configuration file. When some thread jobs are expected to block, having more threads than CPUs is probably better.

https://github.com/Zirias/poser/commit/995c27352615a65723fbd1833b2d36781cbeff4d

Still working on #swad, and currently very busy with improving quality, most of the actual work done inside my #poser library.

After finally supporting #kqueue and #epoll, I now integrated #xxhash to completely replace my previous stupid and naive hashing. I also added a more involved #dictionary class as an alternative to the already existing #hashtable. While the hashtable's size must be pre-configured and collisions are only ever resolved by storing linked lists, the new dictionary dynamically nests multiple hashtables (using different bits of a single hash value). I hope to achieve acceptable scaling while also keeping memory overhead acceptable that way ...
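One way to read "nesting hashtables using different bits of a single hash value" is sketched below. This is a guess at the general idea, not poser's dictionary: the 4-bit fan-out, the FNV-1a hash (a simple stand-in for xxhash), the borrowed string keys and the names `dict_set` / `dict_get` are all assumptions, and freeing is left out for brevity.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

#define BITS 4                    /* hash bits consumed per nesting level */
#define SLOTS (1 << BITS)
#define MAX_DEPTH (64 / BITS)

typedef struct Entry {
    const char *key;              /* borrowed, not copied */
    int value;
    uint64_t hash;
    struct Entry *next;           /* chain, only used at the deepest level */
} Entry;
typedef struct Table Table;
typedef struct { Entry *entry; Table *child; } Slot;
struct Table { Slot slots[SLOTS]; };

static uint64_t hash_key(const char *s)  /* FNV-1a, stand-in for xxhash */
{
    uint64_t h = 14695981039346656037ULL;
    while (*s) { h ^= (unsigned char)*s++; h *= 1099511628211ULL; }
    return h;
}

static unsigned slot_index(uint64_t hash, int depth)
{
    assert(depth >= 0 && depth < MAX_DEPTH);
    return (unsigned)(hash >> (depth * BITS)) & (SLOTS - 1);
}

static Entry *table_find(const Table *t, const char *key, uint64_t hash)
{
    for (int depth = 0; depth < MAX_DEPTH; ++depth) {
        const Slot *s = &t->slots[slot_index(hash, depth)];
        if (s->child) { t = s->child; continue; }  /* descend one level */
        for (Entry *e = s->entry; e; e = e->next)
            if (e->hash == hash && strcmp(e->key, key) == 0) return e;
        return NULL;
    }
    return NULL;
}

static int table_put(Table *t, Entry *e, int depth)
{
    Slot *s = &t->slots[slot_index(e->hash, depth)];
    if (s->child) return table_put(s->child, e, depth + 1);
    if (!s->entry) { s->entry = e; return 0; }
    if (depth == MAX_DEPTH - 1) {  /* hash bits exhausted: chain */
        e->next = s->entry;
        s->entry = e;
        return 0;
    }
    /* collision: replace the slot with a nested table that
     * distinguishes both entries by the next BITS bits */
    Table *child = calloc(1, sizeof *child);
    if (!child) return -1;
    Entry *old = s->entry;
    s->entry = NULL;
    s->child = child;
    table_put(child, old, depth + 1);  /* empty table, cannot fail */
    return table_put(child, e, depth + 1);
}

int dict_set(Table *root, const char *key, int value)
{
    uint64_t hash = hash_key(key);
    Entry *e = table_find(root, key, hash);
    if (e) { e->value = value; return 0; }
    e = calloc(1, sizeof *e);
    if (!e) return -1;
    e->key = key; e->hash = hash; e->value = value;
    if (table_put(root, e, 0) < 0) { free(e); return -1; }
    return 0;
}

int dict_get(const Table *root, const char *key, int *value)
{
    Entry *e = table_find(root, key, hash_key(key));
    if (!e) return 0;
    *value = e->value;
    return 1;
}
```

With this layout, memory grows only where collisions actually occur, and a lookup touches at most `MAX_DEPTH` small tables before reaching either an entry or an empty slot.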

#swad already uses both container classes as appropriate.

Next I'll probably revisit poser's #threadpool. I think I could replace #pthread condition variables by "simple" #semaphores, which should also reduce overhead ...

Fixed cancelling a thread job in #poser's #threadpool. Using a semaphore to do this seems reliable 😎

Oh my. #Multithreading, #synchronization, async #Unix #signals, this is pure "fun" ... 🙈

https://github.com/Zirias/poser/commit/aa4e02b728a549f0e3c4687750b90749d48fcfdc