#3goodthings (Work)

1. Had wonderful meetings w/ students. Their final paper drafts were beautifully written, w/ authentic voices & profound insight. No #AI.

2. Had an introductory meeting w/a Stanford #neurologist w/whom I'll be discussing my #memoir @ the #Stanford Health Library. She's the perfect interlocutor (MA in #NarrativeMedicine, too). More info. about the 6.23 event when the flyer is ready.

3. Attended a #UCSF salon on Courage, followed by a debrief over coffee (natch) w/ a friend.

https://blazetrends.com/stanfords-bold-ai-for-mental-health-symposium-sparks-urgent-call-for-purpose-built-therapy-tech/?fsp_sid=25061

https://law.stanford.edu/press/ai-outperforms-law-professors-in-stanford-law-study/ #Forbidden #Tech #Humor #Legal #Tech #HackerNews #ngated

AI Outperforms Law Professors in Stanford Law Study

https://law.stanford.edu/press/ai-outperforms-law-professors-in-stanford-law-study/

#HackerNews #AI #Law #Study #Stanford #Outperform #Professors #LegalTech

Times of India | An English literature graduate Daniela Amodei on leaving OpenAI to found Anthropic: Looked crazy thing to do, but …

AI-generated summary; read the full article for complete information.

Anthropic co‑founder Daniela Amodei, an English literature graduate who previously worked at Stripe and rose to vice‑president at OpenAI, says she left OpenAI in late 2020 after a mentor reminded her that she already knew the right answer, prompting her and her brother Dario to launch Anthropic in 2021. Since then the startup has become a major rival to OpenAI, overtaking it as the world’s most valuable AI company with a $965 billion valuation following a $65 billion Series H financing round led by Altimeter Capital, Dragoneer, Greenoaks and Sequoia, and reporting a $47 billion revenue run rate largely fueled by its Claude Code AI coding assistant. Amodei also advises aspiring entrepreneurs to test co‑founder chemistry by taking a vacation together before founding a company, noting that extended off‑work time reveals whether a partnership can survive the pressures of building a startup.


AI giant Anthropic co-founder Daniela Amodei has spoken candidly about the difficult decision to leave OpenAI in late 2020, describing it as “kind of a crazy thing to do.” Speaking recently at Stanford Graduate School of Business, as reported by Benzinga, Amodei recalled the uncertainty she and her brother Dario Amodei faced while weighing whether to keep working at OpenAI or strike out on their own. She said the turning point came during a conversation with a trusted mentor, who told her: “Honestly, I don’t think you really need to be on the phone with me.

AI Agent Guidelines for CS336 at Stanford

https://github.com/stanford-cs336/assignment1-basics/blob/main/CLAUDE.md

#HackerNews #AI #Agent #Guidelines #CS336 #Stanford #MachineLearning #StanfordUniversity #TechEducation

RT @ollama: OpenJarvis, a local, user-focused personal AI assistant, is now available with Ollama. It was developed by Stanford University's @HazyResearch and Scaling Intelligence labs as part of their “Intelligence Per Watt” research on efficient local AI. @Stanford Learn more in the blog post 👇👇👇

More on Arint.info

#EffizienteKI #HazyResearch #LokaleKI #Ollama #OpenJarvis #Stanford #arint_info

Arint - SEO+KI

https://arint.info/@Arint/116668608700839998

https://x.com/ollama/status/2060428074102206496#m

RT @ollama: OpenJarvis, a local, privacy-focused personal AI assistant, is now available with Ollama. It was developed by Stanford University's @HazyResearch and Scaling Intelligence labs as part of their “Intelligence Per Watt” research on efficient local AI. @Stanford Learn more in the blog post 👇👇👇

More on Arint.info

#EffizienteKI #IntelligencePerWatt #LokaleKI #Ollama #OpenJarvis #Stanford #arint_info


https://arint.info/@Arint/116661532043459753

https://x.com/ollama/status/2060428074102206496#m

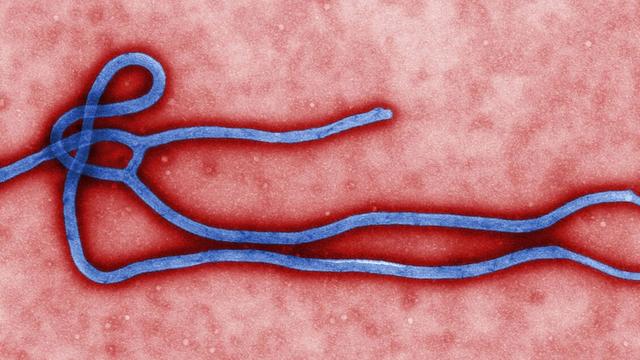

Primo piano ANSA - ANSA.it: Ebola, the five key points of the epidemic

Bundibugyo virus is rare, as are the tests for it. Barry (Stanford): let’s collaborate to defend ourselves.