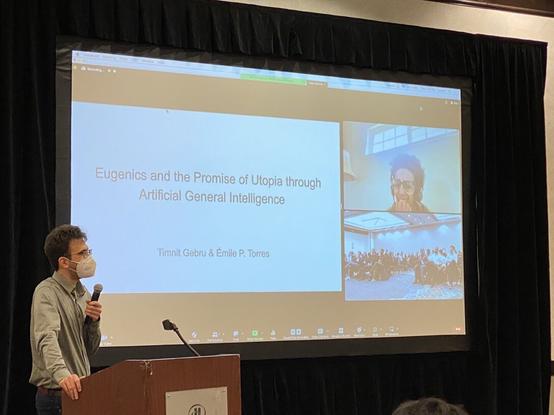

On the bird site, @nicolaspapernot posted a nice summary of @timnitGebru's thought-provoking #SaTML keynote, "Eugenics and the Promise of Utopia through Artificial General Intelligence". The video will become available on the conference website.

My main takeaway: targeting (or promising) AGI is inherently dangerous; we should focus on "well-scoped systems" instead.

https://twitter.com/NicolasPapernot/status/1623885631930658816