Perplexity (@perplexity_ai)

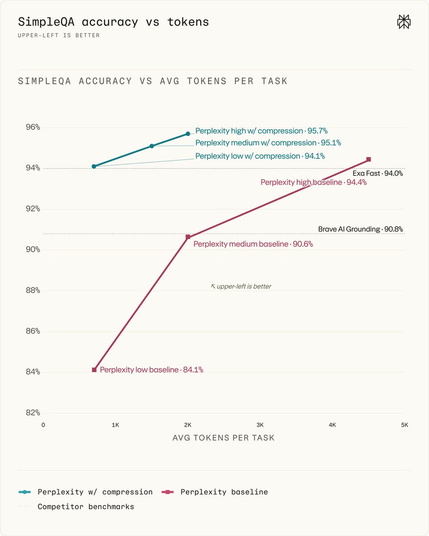

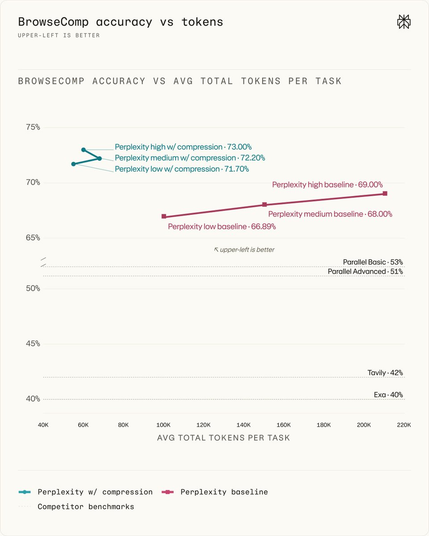

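A case study in stripping ads, navigation, metadata, and other non-essential content from search inputs to raise signal density. The share of core content per snippet rose by 63%, and the team reports a 50x compression ratio with frontier-level performance on SimpleQA. A useful benchmark for optimizing search, reranking, and context construction.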
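The post does not describe how the filtering is done. A minimal sketch of the idea, assuming line-based snippets and a few hypothetical regex rules (`BOILERPLATE_PATTERNS`, `clean_snippet`, and `compression_ratio` are illustrative names, not Perplexity's pipeline): it drops lines that look like ads, navigation, or metadata and reports the raw-to-cleaned size ratio.

```python
import re

# Hypothetical rules for illustration only; the actual filtering used by
# Perplexity is not described in the post.
BOILERPLATE_PATTERNS = [
    re.compile(r"^\s*(advertisement|sponsored)\b", re.IGNORECASE),  # ad labels
    re.compile(r"^\s*(home|menu|sign in|subscribe)(\s*[|>/]\s*\w+)*\s*$", re.IGNORECASE),  # nav crumbs
    re.compile(r"^\s*(posted|updated|published) (on|at)\b", re.IGNORECASE),  # metadata
]


def is_boilerplate(line: str) -> bool:
    """True if the line matches any of the hypothetical boilerplate rules."""
    return any(p.search(line) for p in BOILERPLATE_PATTERNS)


def clean_snippet(snippet: str) -> str:
    """Keep only non-empty lines that are not classified as boilerplate."""
    kept = [ln.strip() for ln in snippet.splitlines() if ln.strip() and not is_boilerplate(ln)]
    return "\n".join(kept)


def compression_ratio(original: str, cleaned: str) -> float:
    """Characters before cleaning divided by characters after (higher = more removed)."""
    return len(original) / max(len(cleaned), 1)


raw = "\n".join([
    "Home | News | Sports",
    "Advertisement",
    "The Eiffel Tower is 330 metres tall.",
    "Subscribe",
    "Published on 2024-01-01",
])
cleaned = clean_snippet(raw)
print(cleaned)
print(f"compression ratio: {compression_ratio(raw, cleaned):.2f}x")
```

A production system would more likely rely on DOM structure or a learned classifier; the regexes only show where such a filter sits before the context is assembled.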

Perplexity (@perplexity_ai)

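Perplexity says it has deployed query-aware compression in production, improving both search quality and speed. According to the company, context tokens dropped by up to 70% while answer quality went up. A practical reference point for context optimization in RAG and search systems.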
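The announcement does not explain the method. As a rough illustration only, the sketch below performs extractive query-aware compression with a crude lexical score: it splits the context into sentences, scores each by overlap with the query's words, and keeps the best ones within a word budget. `compress_for_query` and its scoring rule are hypothetical; real systems typically use a trained relevance model rather than word overlap.

```python
import re


def tokenize(text: str) -> list[str]:
    """Lowercased word tokens; a stand-in for a real tokenizer."""
    return re.findall(r"\w+", text.lower())


def compress_for_query(context: str, query: str, budget_tokens: int) -> str:
    """Keep the sentences most relevant to `query` within `budget_tokens` words.

    Relevance is a crude assumption: the share of a sentence's words that also
    appear in the query. Sentences with no overlap are always dropped.
    """
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", context) if s.strip()]
    query_terms = set(tokenize(query))

    scored = []
    for idx, sent in enumerate(sentences):
        words = tokenize(sent)
        overlap = sum(1 for w in words if w in query_terms)
        # Normalize by length so long sentences don't win just by being long.
        scored.append((overlap / max(len(words), 1), idx, sent, len(words)))

    chosen, used = [], 0
    # Greedy: best score first, ties broken by original position.
    for score, idx, sent, n_words in sorted(scored, key=lambda x: (-x[0], x[1])):
        if score == 0:
            break
        if used + n_words <= budget_tokens:
            chosen.append((idx, sent))
            used += n_words

    # Restore document order so the compressed context still reads naturally.
    return " ".join(sent for _, sent in sorted(chosen))


context = (
    "Paris is the capital of France. The weather was nice yesterday. "
    "France has a population of about 68 million. Cats like to sleep."
)
print(compress_for_query(context, "What is the capital of France?", budget_tokens=10))
```

Because the query decides what survives, the same document compresses differently for different questions, which is the point of making compression query-aware rather than a fixed summary.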

https://www.youtube.com/watch?v=RXvo0wBeWlQ

Compute Optimal Tokenization: Scaling Laws for Data Compression in LLMs

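In this paper, Meta AI researchers analyze how a tokenizer's data compression rate affects model performance and scaling laws in LLM training. The main findings are: (1) the optimal ratio of data bytes to parameters stays constant; (2) the optimal compression rate decreases as the training budget grows; and (3) both the optimal compression rate and this ratio vary by language and differ from those of existing BPE tokenizers. The work suggests that tuning the compression rate during tokenizer design and data preparation matters for model efficiency.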
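One common way to quantify a tokenizer's compression rate is average UTF-8 bytes per token; the paper's exact definition may differ. Assuming that definition, the sketch below measures it with toy tokenizers (character-level `list` and whitespace `str.split`) standing in for a real BPE vocabulary, and converts a token budget into a data-bytes-per-parameter figure. `bytes_per_token` and `data_bytes_per_param` are illustrative helpers, not code from the paper.

```python
def bytes_per_token(texts, tokenize) -> float:
    """Average UTF-8 bytes per token over a corpus (higher = stronger compression)."""
    total_bytes = sum(len(t.encode("utf-8")) for t in texts)
    total_tokens = sum(len(tokenize(t)) for t in texts)
    return total_bytes / total_tokens


def data_bytes_per_param(n_tokens: float, bpt: float, n_params: float) -> float:
    """Training data measured in bytes rather than tokens, divided by model size."""
    return n_tokens * bpt / n_params


english = ["hello world"]
korean = ["안녕하세요"]

# Toy tokenizers standing in for a real BPE vocabulary.
char_level = list       # one token per Unicode character
word_level = str.split  # one token per whitespace-separated word

print(bytes_per_token(english, char_level))  # ASCII: 1 byte per character
print(bytes_per_token(english, word_level))
print(bytes_per_token(korean, char_level))   # each Hangul syllable is 3 UTF-8 bytes
print(data_bytes_per_param(n_tokens=1e9, bpt=4.0, n_params=1e8))
```

Even the same character-level scheme yields 3 bytes per token on Korean versus 1 on ASCII English, a concrete instance of compression rates differing by language. Holding bytes per parameter fixed, a tokenizer that packs more bytes into each token needs fewer tokens to cover the same data.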

Make ZIP files smaller with ZIP Shrinker

https://evanhahn.com/make-zip-files-smaller-with-zip-shrinker/

A cheap fix that saves the AI $400M a year and brings 4B people online

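Codec is a protocol that eliminates the redundant step of converting token IDs to text in AI inference pipelines, sharply reducing network bandwidth and CPU usage. According to its authors, this cuts AI services' communication costs by about $400M per year and reduces data transfer by up to 1700x on mobile, extending AI access to more than 5 billion users. It is described as compatible with major AI platforms and adoptable without changes to existing code, substantially lowering AI infrastructure operating costs and latency.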

#aiinference #compression #networkoptimization #middleware #costreduction
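The post does not document Codec's wire format. As a hypothetical sketch of why shipping token IDs instead of text can be compact, the code below packs IDs as LEB128-style varints (the scheme protobuf uses), so IDs below 128 take one byte and IDs below 16,384 take two. `encode_varint_ids` and `decode_varint_ids` are illustrative names, not Codec's API.

```python
def encode_varint_ids(ids: list[int]) -> bytes:
    """Pack non-negative token IDs as LEB128 varints: 7 payload bits per byte,
    high bit set on every byte except the last of each ID."""
    out = bytearray()
    for value in ids:
        if value < 0:
            raise ValueError("token IDs must be non-negative")
        while True:
            low7 = value & 0x7F
            value >>= 7
            if value:
                out.append(low7 | 0x80)  # more bytes follow
            else:
                out.append(low7)         # final byte of this ID
                break
    return bytes(out)


def decode_varint_ids(data: bytes) -> list[int]:
    """Inverse of encode_varint_ids."""
    ids, value, shift = [], 0, 0
    for byte in data:
        value |= (byte & 0x7F) << shift
        if byte & 0x80:
            shift += 7
        else:
            ids.append(value)
            value, shift = 0, 0
    if shift:
        raise ValueError("truncated varint at end of input")
    return ids


token_ids = [0, 127, 128, 300, 50256]  # made-up IDs for illustration
packed = encode_varint_ids(token_ids)
print(len(packed), "bytes for", len(token_ids), "tokens")
```

The receiver still needs the same vocabulary to turn IDs back into text, so both ends must agree on the tokenizer for this kind of scheme to work.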

New tool: upload a ZIP file, get a smaller ZIP file back. Primarily relies on better Deflate compression, but also has a few small tricks to save bytes. https://evanhahn.com/uploads/2026-05-16-zip-shrinker/

Read more here: https://evanhahn.com/make-zip-files-smaller-with-zip-shrinker/
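A minimal sketch of the main idea using only Python's standard `zipfile` module: rewrite every entry with Deflate at `compresslevel=9` and keep the result only if it is smaller. This is an assumption about the general approach, not ZIP Shrinker's code; the post's extra byte-saving tricks are not reproduced, and stronger Deflate encoders such as Zopfli can beat zlib level 9. `shrink_zip` is a hypothetical name.

```python
import io
import zipfile


def shrink_zip(data: bytes) -> bytes:
    """Re-deflate every entry at maximum zlib level; return whichever archive is smaller."""
    out = io.BytesIO()
    with zipfile.ZipFile(io.BytesIO(data)) as src, zipfile.ZipFile(out, "w") as dst:
        for info in src.infolist():
            payload = src.read(info)
            # Fresh ZipInfo keeps name, timestamp, and permission bits but drops
            # the old per-entry extra fields and comments.
            new_info = zipfile.ZipInfo(info.filename, date_time=info.date_time)
            new_info.external_attr = info.external_attr
            dst.writestr(new_info, payload,
                         compress_type=zipfile.ZIP_DEFLATED, compresslevel=9)
    # The central directory is only complete once `dst` is closed.
    result = out.getvalue()
    return result if len(result) < len(data) else data
```

For example, `shrink_zip(open("in.zip", "rb").read())` returns the new archive bytes. Falling back to the input guarantees the output is never larger, which matters when entries were already compressed well.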

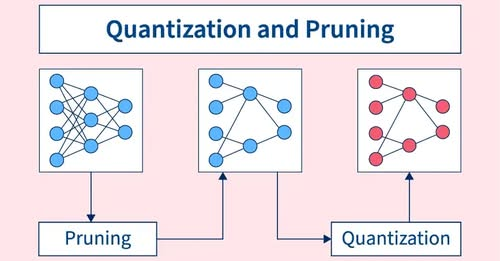

#ITByte: #Model #Compression is a critical step towards making large, powerful #MachineLearning models more practical, efficient, and widely deployable across a diverse range of applications and hardware platforms.

https://knowledgezone.co.in/posts/Machine-Learning-Model-Compression-6824b3f68eac05b3479e557c