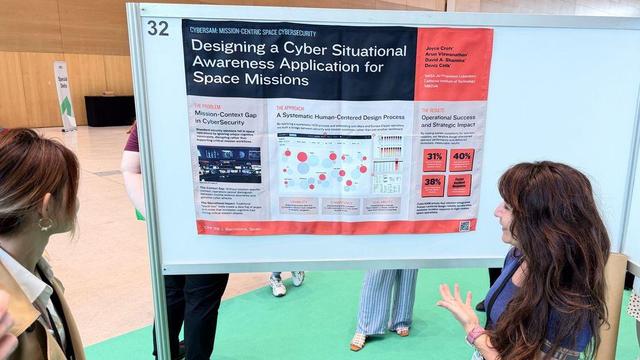

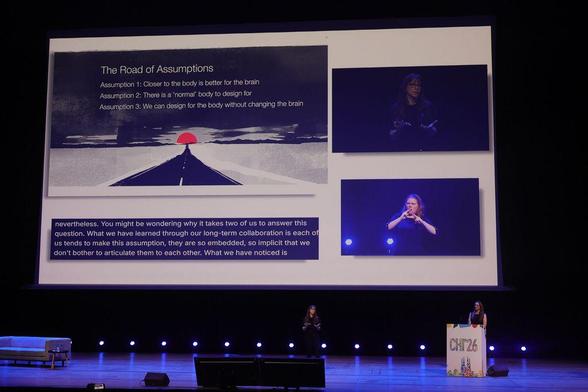

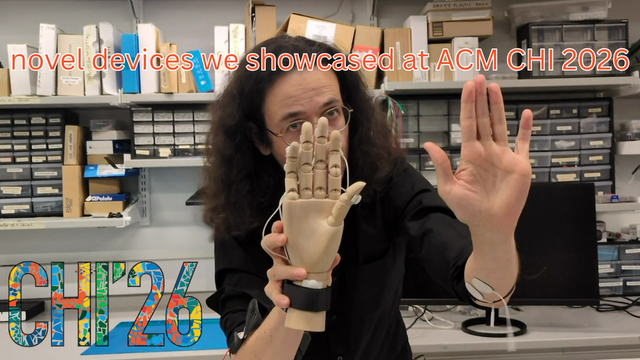

Huge thanks to all who made #CHI2026 a success! To our fantastic participants, organizers, sponsors, exhibitors, volunteers, and attendees: your contributions made it unforgettable. See you next year in Pittsburgh! 🚀

#ThankYou #researchlife #computing #barcelona #ACMSIGCHI

Photo credit: CHI 2026