What's your favorite Python SEO crawler?

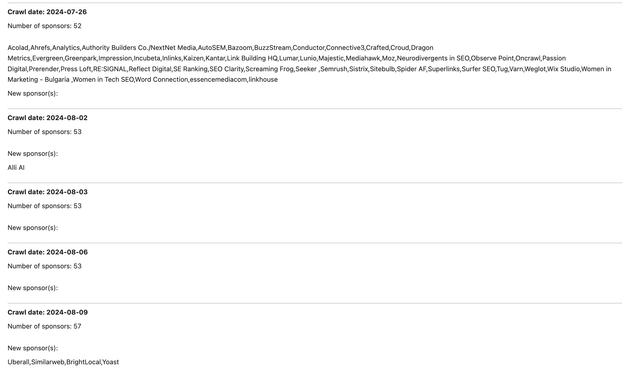

According to The Google, there seems to be one dominant option: advertools.

`python3 -m pip install advertools`
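Once it's installed, a first crawl can be sketched roughly like this. The wrapper `crawl_site`, the start URL, and the output file name are placeholders made up for illustration; `adv.crawl` is the package's crawl function, which writes one JSON object per line to a `.jl` file. Treat this as a minimal sketch under those assumptions, not the one right way to run it.

```python
from __future__ import annotations

from pathlib import Path


def crawl_site(start_url: str, output_file: str = "crawl_output.jl") -> Path:
    """Crawl a site with advertools and return the path of the output file.

    `crawl_site` is a hypothetical helper, not part of advertools.
    """
    out = Path(output_file)
    # advertools writes JSON lines, so check the extension before crawling
    if out.suffix != ".jl":
        raise ValueError("output_file should end with .jl")

    # Imported here so the helper can be defined without advertools installed
    import advertools as adv

    # follow_links=True discovers and crawls linked pages from the start URL;
    # leave it False to fetch only the URL(s) you pass in
    adv.crawl(start_url, str(out), follow_links=True)
    return out


if __name__ == "__main__":
    # Placeholder URL: swap in the site you actually want to crawl
    print(crawl_site("https://example.com", "example_crawl.jl"))
```

The resulting file can then be loaded with `pandas.read_json(path, lines=True)` for analysis.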

If you dig deeper, you'll find two other crawlers:

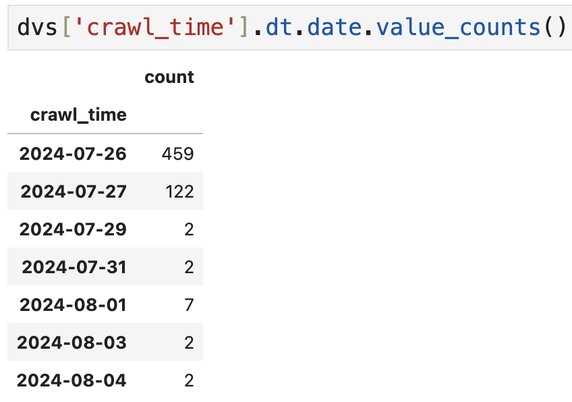

- One for status codes and all response headers

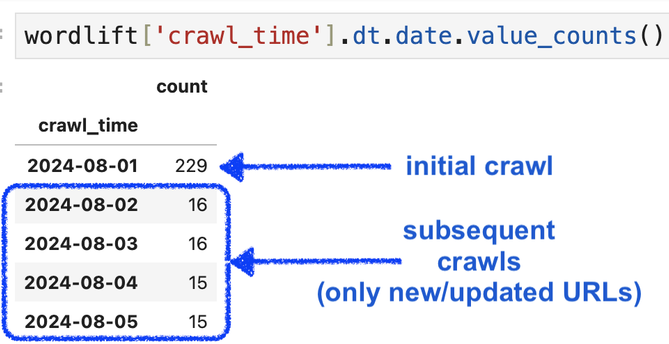

- One for downloading images from a list of URLs
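
Assuming the two crawlers above are advertools' `crawl_headers` and `crawl_images` functions, a rough sketch of calling them could look like this. The helper names `check_headers` and `download_images`, the URLs, and the output paths are all hypothetical.

```python
from __future__ import annotations

from pathlib import Path


def check_headers(urls: list[str], output_file: str = "headers.jl") -> Path:
    """Collect status codes and response headers for a list of URLs.

    `check_headers` is a hypothetical helper, not part of advertools.
    """
    out = Path(output_file)
    # The header crawler also writes JSON lines
    if out.suffix != ".jl":
        raise ValueError("output_file should end with .jl")

    # Imported here so the helper can be defined without advertools installed
    import advertools as adv

    adv.crawl_headers(urls, str(out))
    return out


def download_images(urls: list[str], output_dir: str = "images") -> Path:
    """Download the images found on a list of pages into output_dir.

    `download_images` is a hypothetical helper, not part of advertools.
    """
    out = Path(output_dir)
    out.mkdir(parents=True, exist_ok=True)

    import advertools as adv

    adv.crawl_images(urls, str(out))
    return out


if __name__ == "__main__":
    # Placeholder URLs: swap in your own list
    pages = ["https://example.com"]
    print(check_headers(pages, "example_headers.jl"))
    print(download_images(pages, "example_images"))
```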