AACL-IJCNLP 2022 is over, and it was a pleasure to attend virtually, despite the painfully noticeable time difference.

Our paper "The Lifecycle of 'Facts': A Survey of Social Bias in Knowledge Graphs" (co-author: Ricardo Usbeck) is now available in the proceedings:

The Lifecycle of “Facts”: A Survey of Social Bias in Knowledge Graphs

Angelie Kraft, Ricardo Usbeck. Proceedings of the 2nd Conference of the Asia-Pacific Chapter of the Association for Computational Linguistics and the 12th International Joint Conference on Natural Language Processing. 2022.

"Some Languages are More Equal than Others: Probing Deeper into the Linguistic Disparity in the NLP World"

https://arxiv.org/abs/2210.08523

A very strong paper with important analyses. Highly recommended read.

🧵 Based on the talk by Surangika Ranathunga in the Linguistic Diversity track

#aacl2022

Some Languages are More Equal than Others: Probing Deeper into the Linguistic Disparity in the NLP World

Linguistic disparity in the NLP world is a problem that has been widely acknowledged recently. However, different facets of this problem, or the reasons behind this disparity, are seldom discussed within the NLP community. This paper provides a comprehensive analysis of the disparity that exists within the languages of the world. We show that simply categorising languages considering data availability may not always be correct. Using an existing language categorisation based on speaker population and vitality, we analyse the distribution of language data resources, the amount of NLP/CL research, inclusion in multilingual web-based platforms and inclusion in pre-trained multilingual models. We show that many languages do not get covered in these resources or platforms, and even within the languages belonging to the same language group, there is wide disparity. We analyse the impact of family, geographical location, GDP and the speaker population of languages and provide possible reasons for this disparity, along with some suggestions to overcome it.

Toot thread from the #fairness in #NLProc panel at #aacl2022

Panelists: Monojit Choudhury, Sunipa Dev, Alice Oh, @isa

(Sorry, I don't have all the Mastodon handles here; please tag them if you know them.)

Moderator: Mona Diab

--

Q: Perspectives on definitions of fairness

Alice: We do not have a general definition of fairness. Current metrics do not take variation in geo-cultural variables into account.

Sunipa: Context/use case is important. Flip the question: instead of asking whether it is fair, ask what harm it can cause.

Dodging the Data Bottleneck: Automatic Subtitling with Automatically Segmented ST Corpora

Speech translation for subtitling (SubST) is the task of automatically translating speech data into well-formed subtitles by inserting subtitle breaks compliant with specific display guidelines. Similar to speech translation (ST), model training requires parallel data comprising audio inputs paired with their textual translations. In SubST, however, the text also has to be annotated with subtitle breaks. So far, this requirement has represented a bottleneck for system development, as confirmed by the dearth of publicly available SubST corpora. To fill this gap, we propose a method to convert existing ST corpora into SubST resources without human intervention. We build a segmenter model that automatically segments texts into proper subtitles by exploiting audio and text in a multimodal fashion, achieving high segmentation quality in zero-shot conditions. Comparative experiments with SubST systems trained on manual and automatic segmentations, respectively, show similar performance, demonstrating the effectiveness of our approach.

Heng Ji @elgreco_winter on Twitter writes:

"This public debate is happening today 6pm ET!

“Is there more to NLP than Deep Learning?”

- Moderator: Rada Mihalcea

- Yes: Eduard Hovy (Lead), Kathleen McKeown, Dan Roth, Eric Xing

- No: Kyunghyun Cho (Lead), Danqi Chen, Manling Li, Graham Neubig

Zoom link: tinyurl.com/25bar2w4"

And I guess I'm on the side of "Why is the question even framed this way?"

@shaily99 Hey, nice to see more people on Mastodon talking about #aacl2022 :)) (though it seems there are only a few of us so far)

I think the right word is "tooting"!

There is no "quote-toot"; apparently the right way is to just reply to toots instead! :) https://mastodon.social/@Gargron/99662106175542726

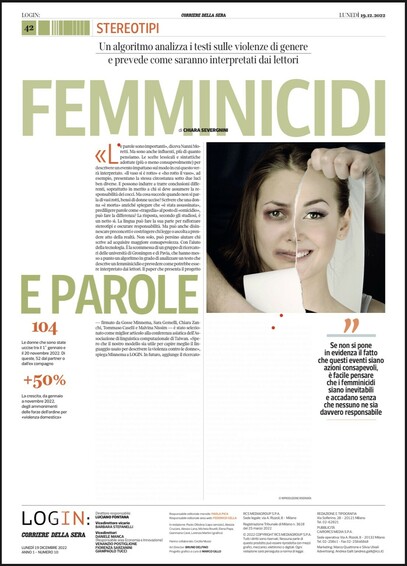

Super excited to be at #aacl2022 in virtual Taipei! Starting early this morning with my presentation together with @saranonpiangere, Chiara Zanchi, @tommaso_caselli and Malvina Nissim

Find our paper "Dead or Murdered? Predicting Responsibility Perception in Femicide News Reports" at https://arxiv.org/abs/2209.12030

Dead or Murdered? Predicting Responsibility Perception in Femicide News Reports

Different linguistic expressions can conceptualize the same event from different viewpoints by emphasizing certain participants over others. Here, we investigate a case where this has social consequences: how do linguistic expressions of gender-based violence (GBV) influence who we perceive as responsible? We build on previous psycholinguistic research in this area and conduct a large-scale perception survey of GBV descriptions automatically extracted from a corpus of Italian newspapers. We then train regression models that predict the salience of GBV participants with respect to different dimensions of perceived responsibility. Our best model (fine-tuned BERT) shows solid overall performance, with large differences between dimensions and participants: salient _focus_ is more predictable than salient _blame_, and perpetrators' salience is more predictable than victims' salience. Experiments with ridge regression models using different representations show that features based on linguistic theory perform similarly to word-based features. Overall, we show that different linguistic choices do trigger different perceptions of responsibility, and that such perceptions can be modelled automatically. This work can be a core instrument to raise awareness of the consequences of different perspectivizations in the general public and in news producers alike.