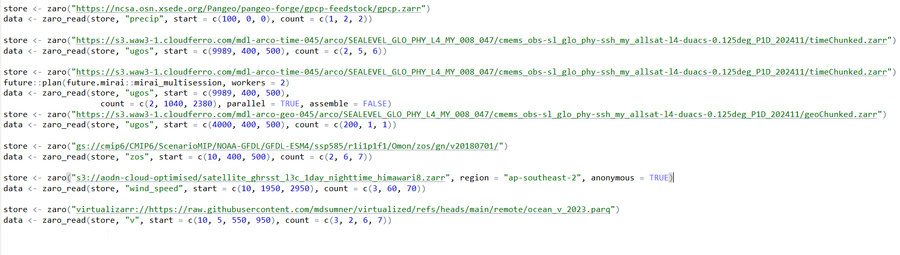

Do you work with Zarr data? The `pizzarr` package has just been published on CRAN! It is an R implementation for creating, reading, and writing chunked Zarr arrays, developed by David Blodgett and Mark Keller. It supports both Zarr V2 and V3 and slices large N-dimensional arrays efficiently, which makes it well suited to very large datasets.

GitHub repository: https://github.com/zarr-developers/pizzarr
