Pop-Up Sale!

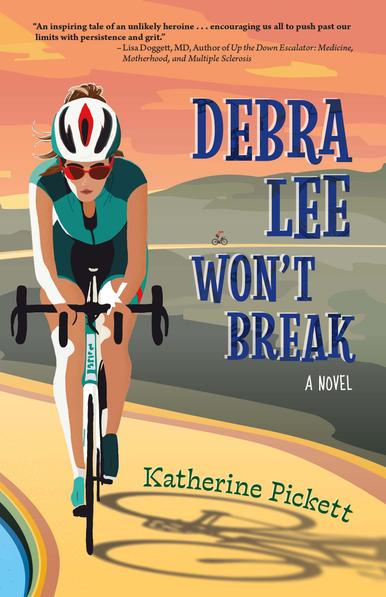

Get 30% off the print edition of Debra Lee Won't Break by Katherine Pickett

Today only!

“Hooked me from the first page. . . . [Pickett] has created a protagonist with grit, resilience, and a heart so big that it beats through every page of this well-crafted novel.”

—Michelle Brafman, author of Swimming with Ghosts and Draw Near to Me

Scan the QR code to get the discount!

Or click here: https://shop.ingramspark.com/b/084?params=TlFH20PZhEFzUwomjlLaJQsA9lM0Xsuxxua3oPw23EP