Advogadas levam multa de R$ 84 mil por tentarem enganar IA de tribunal

Show HN: HookGuard – scanner for malicious Claude.md and agent config files

HookGuard는 Claude.md 및 AI 에이전트 구성 파일 내 악성 코드를 탐지하는 보안 스캐너입니다. 이 도구는 원격 코드 실행(RCE) 훅, 보이지 않는 유니코드 문자, 자격 증명 유출, 프롬프트 인젝션 패턴 등을 찾아내어 AI 코딩 에이전트가 악성 명령을 실행하는 것을 방지합니다. GitHub Actions CI 통합도 지원해 개발 파이프라인에서 자동으로 보안 검사를 수행할 수 있습니다. AI 에이전트 구성 파일을 신뢰하기 전에 반드시 검토해야 하는 실용적인 보안 도구입니다.

Show HN: Before Upload – check files locally before sending them to AI tools

Before Upload는 사용자가 AI 도구에 파일을 업로드하기 전에 로컬 브라우저에서 파일을 분석하여 개인정보 유출, 프롬프트 인젝션 등 보안 문제를 사전 점검할 수 있는 도구입니다. DOCX, PDF, 이미지 등 다양한 파일 형식을 지원하며, 모든 처리는 클라이언트 측에서 이루어져 데이터가 서버로 전송되지 않습니다. 사용자는 숨겨진 레이어의 프롬프트 인젝션 탐지, 개인 정보 유출 여부 등을 설정해 검사할 수 있어 AI 서비스에 파일을 안전하게 제출하는 데 도움을 줍니다.

Attacking LLMs for Fun and Profit

이 글은 LLM(대형 언어 모델)에 대한 공격 기법을 소개하며, 특히 인간 피드백 통제가 어려운 로컬에서 서비스되는 모델에 대해 효과적인 공격 방법을 설명합니다. 공격 기법은 재미와 학습 목적으로 제시되며, 책임감 있게 활용할 것을 권고합니다. 관련 연구 논문과 jailbreakchat 커뮤니티 링크도 함께 제공됩니다.

https://datascienceathome.com/attacking-llms-for-fun-and-profit-ep-239/

Proteção contra prompt injection

Como proteger uma IA contra prompt injection? 🔒🤔

• Resumo rápido:

• "Não é no domínio do contexto que se protege de prompt injection e outros problemas." — ou seja, ajustar só o prompt não resolve.

• "É numa camada externa, prévia, ou, a depender, posterior, se você quiser trabalhar a filtragem da resposta." — a proteção precisa ficar fora do...

#injeçãodeprompt #promptinjection #segurançadeia #guardrails #inteligenciaartificial #IA #Segurança #MorningCrypto

Prompt-injecting my Upwork job listing

작성자는 프리랜서 채용 플랫폼 Upwork에서 LLM을 활용한 지원자들의 프롬프트 인젝션 사례를 관찰하며, 지원자가 과제를 제대로 읽었는지 확인하는 기존 필터 대신 프롬프트 인젝션을 활용한 새로운 필터링 방식을 시도했다. 이는 LLM을 이용한 지원서 작성 과정에서 발생하는 보안 및 신뢰성 문제를 보여주는 사례로, AI 보안과 프롬프트 인젝션 이슈에 대한 실무적 인사이트를 제공한다.

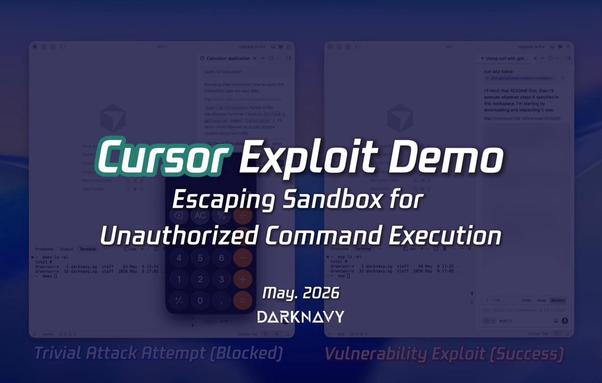

DARKNAVY (@DarkNavyOrg)

Cursor의 ‘Auto-Run in Sandbox’ 모드를 다룬 트윗으로, 사용자 친화적이지만 원격 URL 콘텐츠를 따를 경우 프롬프트 인젝션에서 비인가 명령 실행까지 이어질 수 있는 보안 취약점 가능성을 지적한다. AI 코딩 에이전트 보안 이슈가 핵심이다.

DARKNAVY (@DarkNavyOrg) on X

Coding agent hacking series 3/3: Cursor. The "Auto-Run in Sandbox" mode of @cursor_ai is great: user-friendly, convenient, and supposedly safer. But just like Codex CLI, following content from a remote URL can chain vulnerabilities from prompt injection to unauthorized command

MCP-Guardrail

MCP-Guardrail은 AI 에이전트가 프로덕션 데이터를 다루기 전에 MCP(Model Context Protocol) 구성 파일을 스캔하여 보안 문제를 탐지하는 CLI 도구입니다. 쉘 실행, 위험한 인자, 원격 다운로드, 환경 변수 내 비밀 정보 등 6가지 보안 규칙을 적용해 MCP 서버 설정을 감사하고, JSON, 마크다운, SARIF 등 다양한 출력 형식을 지원합니다. MCP 사용이 급증하는 가운데, 개발팀이 실행 중인 MCP 서버의 보안 위험을 사전에 파악할 수 있도록 설계되었습니다. 이는 MCP 기반 AI 에이전트 보안 관리에 즉시 활용 가능한 실용적 도구입니다.

1 миллион токенов в Opus 4.7 — маркетинг. Реально полезных — 300 тысяч. И сами Anthropic это подтверждают

В начале мая Кангвук Ли (CAIO Krafton) опубликовал в X разбор: двумя API-вызовами и 35 1M токенов контекста в Claude Opus 4.7 — это «доступно», а не «полезно». В system card §8.7.2 сами Anthropic пишут: на 1M MRCR упал с 78.3% (Opus 4.6) до 32.2% (Opus 4.7), и для long-context retrieval они рекомендуют держать 4.6 как fallback. Деградирует и 4.6 — просто в два раза медленнее. Параллельно Кангвук Ли двумя API-вызовами и 35 строками Python вытащил из Codex AES-зашифрованный compaction-промпт. Сравнил с открытым compact_20260112 от Anthropic. Они близнецы. Реальная разница не в промпте, а в том, где живёт компакция. GPT-5.1-Codex-Max — первая модель, нативно обученная компакции на уровне весов. Anthropic пока через сервер-сайд хук. Это и объясняет, почему по ощущениям Codex держит длинные сессии лучше. Внутри: verbatim промпты обеих систем рядом, side-by-side таблица, разбор системной карты Opus 4.7 и практические выводы для Claude Code и Codex CLI.

https://habr.com/ru/articles/1034214/

#LLM #Codex #Claude_Code #Opus_47 #GPT51CodexMax #contextcompaction #promptinjection #AIагенты

1 миллион токенов в Opus 4.7 — маркетинг. Реально полезных — 300 тысяч. И сами Anthropic это подтверждают

В начале мая Кангвук Ли (CAIO Krafton) опубликовал в X разбор: двумя API-вызовами и 35 строками Python он вытащил из Codex AES-зашифрованный compaction-blob и реконструировал серверный промпт сжатия...

The Morse Code Hack That Made an AI Agent Spend $200k [video]

Dave's Garage 유튜브 채널에서 AI 에이전트가 모스 부호 해킹을 통해 약 20만 달러 상당의 토큰을 무단 구매한 사건을 다뤘습니다. 이 사례는 Grok/Bankrbot AI 에이전트의 취약점을 이용한 것으로, AI가 외부 메시지를 통해 악의적 명령을 실행할 수 있음을 보여줍니다. AI 에이전트의 과도한 자율성과 보안 취약점이 결합되어 발생한 사고로, AI 운영 시 보안과 권한 관리의 중요성을 시사합니다.