#ai #ml #neuroscience #computationalneuroscience #interpretability #NeuralRepresentations #neurips2023

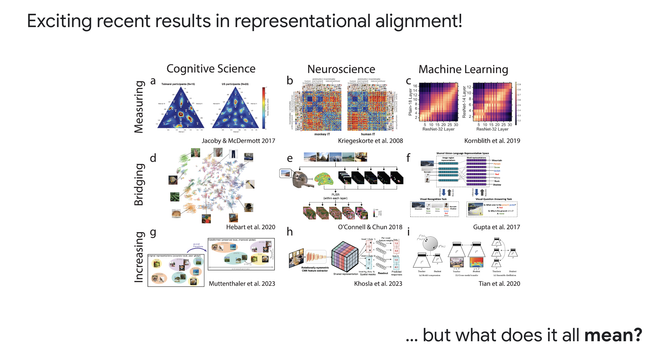

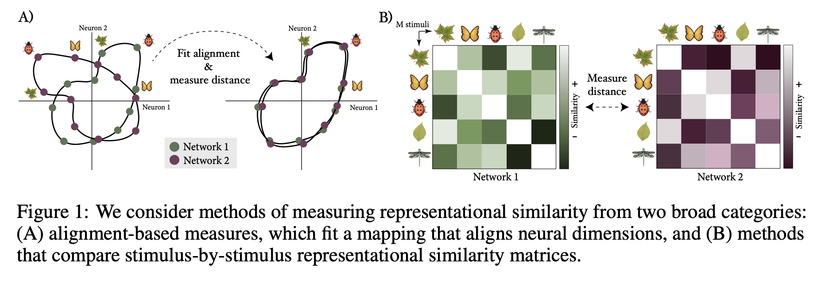

How to measure (dis)similarity between #NeuralRepresentations? This work by Harvey et al. (2023) (@ahwilliams lab) illuminates the relation between #CanonicalCorrelationsAnalysis (#CCA), shape distances, #RepresentationalSimilarityAnalysis (#RSA), #CenteredKernelAlignment (#CKA), and #NormalizedBuresSimilarity (#NBS):

Duality of Bures and Shape Distances with Implications for Comparing Neural Representations

A multitude of (dis)similarity measures between neural network representations have been proposed, resulting in a fragmented research landscape. Most of these measures fall into one of two categories. First, measures such as linear regression, canonical correlations analysis (CCA), and shape distances, all learn explicit mappings between neural units to quantify similarity while accounting for expected invariances. Second, measures such as representational similarity analysis (RSA), centered kernel alignment (CKA), and normalized Bures similarity (NBS) all quantify similarity in summary statistics, such as stimulus-by-stimulus kernel matrices, which are already invariant to expected symmetries. Here, we take steps towards unifying these two broad categories of methods by observing that the cosine of the Riemannian shape distance (from category 1) is equal to NBS (from category 2). We explore how this connection leads to new interpretations of shape distances and NBS, and draw contrasts of these measures with CKA, a popular similarity measure in the deep learning literature.
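A quick numerical sanity check of the central claim, as a minimal sketch rather than the authors' code. It assumes the standard definitions: with mean-centered response matrices X and Y (stimuli × units, same number of units), the cosine of the Riemannian shape distance is the nuclear norm of XᵀY divided by ‖X‖·‖Y‖ (Frobenius norms), and NBS is the fidelity Tr[(K_X^{1/2} K_Y K_X^{1/2})^{1/2}] of the linear kernels K_X = XXᵀ and K_Y = YYᵀ, normalized by sqrt(Tr K_X · Tr K_Y). Linear CKA is included only for contrast. All function names and the random test data are made up for illustration.

```python
import numpy as np


def center(X):
    """Subtract the mean over stimuli (rows) from every unit (column)."""
    return X - X.mean(axis=0, keepdims=True)


def psd_sqrt(K):
    """Matrix square root of a symmetric positive semidefinite matrix."""
    K = (K + K.T) / 2.0                 # symmetrize against round-off
    w, V = np.linalg.eigh(K)
    w = np.clip(w, 0.0, None)           # remove tiny negative eigenvalues
    return (V * np.sqrt(w)) @ V.T


def cos_shape_distance(X, Y):
    """Category 1: cosine of the Riemannian shape distance.

    Maximizing <X, YQ> over orthogonal Q gives the nuclear norm of X^T Y.
    """
    X, Y = center(X), center(Y)
    nuc = np.linalg.norm(X.T @ Y, ord="nuc")
    return nuc / (np.linalg.norm(X) * np.linalg.norm(Y))


def nbs(X, Y):
    """Category 2: normalized Bures similarity of the linear kernels."""
    X, Y = center(X), center(Y)
    KX, KY = X @ X.T, Y @ Y.T
    R = psd_sqrt(KX)
    fidelity = np.trace(psd_sqrt(R @ KY @ R))
    return fidelity / np.sqrt(np.trace(KX) * np.trace(KY))


def linear_cka(X, Y):
    """Linear CKA, for contrast: squared Frobenius norm instead of nuclear norm."""
    X, Y = center(X), center(Y)
    num = np.linalg.norm(X.T @ Y) ** 2
    return num / (np.linalg.norm(X.T @ X) * np.linalg.norm(Y.T @ Y))


# Hypothetical data: 30 stimuli, 10 units per network.
rng = np.random.default_rng(0)
X = rng.standard_normal((30, 10))
Y = rng.standard_normal((30, 10))

print("cos(shape distance):", cos_shape_distance(X, Y))
print("NBS:                ", nbs(X, Y))
print("linear CKA:         ", linear_cka(X, Y))
```

The first two numbers should agree up to floating-point error, while linear CKA generally gives a different value. Note that NBS is computed here purely from stimulus-by-stimulus kernels, whereas the shape-distance version works on the unit-by-unit cross-covariance, which is the duality the title refers to.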

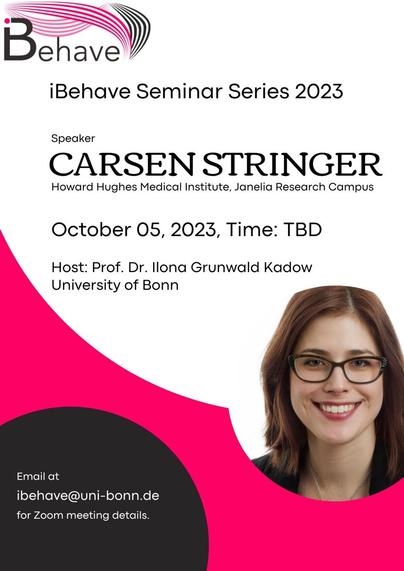

Very much looking forward to the upcoming #iBehave seminar with Carsen Stringer (@computingnature) on “Unsupervised pretraining of #NeuralRepresentations for #TaskLearning” 👌

⏰ October 06, 2023, at 12 pm

📍 online

🌏 https://ibehave.nrw/news-and-events/ibehave-seminar-series-talk-by-dr-carsen-stringer/

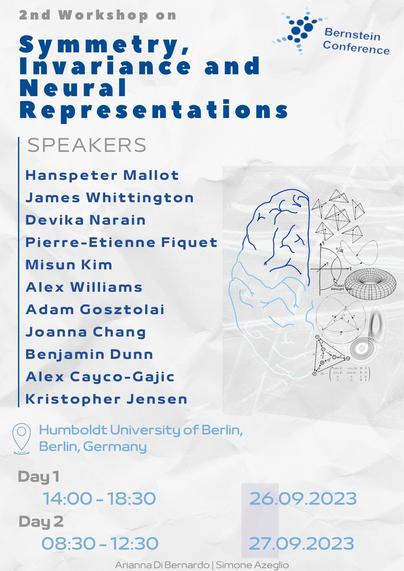

Looking forward to tomorrow’s 2nd workshop on #symmetry, invariance and #NeuralRepresentations at the #BernsteinConference: #GroupTheory, #manifolds, and #Euclidean vs #nonEuclidean #geometry #perception … I’m pretty excited 🤟😊

#CompNeuro #computationalneuroscience