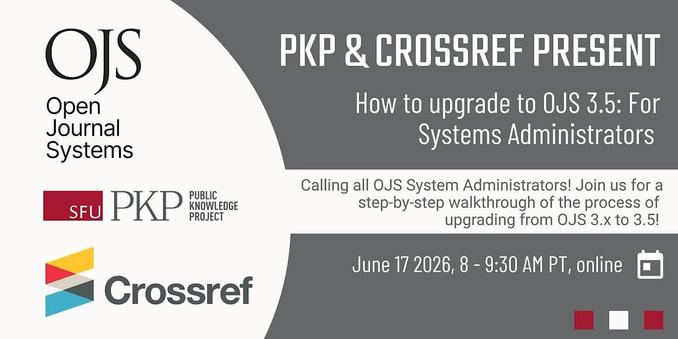

🤝 PKP and Crossref continue to join forces, this time to support folks who wish to upgrade to OJS 3.5. Upgrading not only makes you a fuller part of the scholarly publishing ecosystem, it also gives you greater journal stability, security, and workflow efficiency. Join us to learn more! https://bit.ly/4ab9baF

#OpenJournalSystems #OpenAccess #DiamondOpenAccess #ScholComm #ScholarlyPublishing #AcademicChatter #Metadata #DOIs #JournalManagers #JournalEditors #ojsSystemAdmins