Range-Based Set Reconciliation via Range-Summarizable Order-Statistics Stores

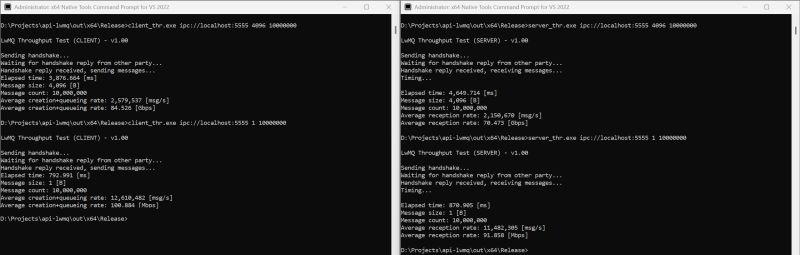

Range-Based Set Reconciliation (RBSR) synchronizes ordered sets by recursively comparing summaries of contiguous ranges and refining only the mismatching parts. While its communication complexity is well understood, its local computational cost depends fundamentally on the storage backend.

We introduce AELMDB, an extension of LMDB that realizes this design.
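
To make the recursive compare-and-refine step concrete, here is a minimal sketch of RBSR over two in-memory sorted lists. It is an illustration under stated assumptions, not the protocol or the AELMDB implementation: both parties are simulated in one process, and the names `fingerprint`, `slice_range`, `reconcile`, and `leaf_size` are hypothetical. The range summary used here (item count plus XOR of truncated SHA-256 digests) is just one simple choice.

```python
import hashlib
from bisect import bisect_left


def fingerprint(items):
    """Summarize a range as (count, XOR of 64-bit truncated SHA-256 digests)."""
    acc = 0
    for item in items:
        digest = hashlib.sha256(repr(item).encode()).digest()
        acc ^= int.from_bytes(digest[:8], "big")
    return (len(items), acc)


def slice_range(sorted_items, lo, hi):
    """Return the items x with lo <= x < hi from a sorted list."""
    start = bisect_left(sorted_items, lo)
    end = bisect_left(sorted_items, hi)
    return sorted_items[start:end]


def reconcile(a, b, lo, hi, leaf_size=2):
    """Return (only_in_a, only_in_b) for the half-open range [lo, hi).

    Assumes a and b are sorted lists of distinct items and leaf_size >= 1.
    """
    ra, rb = slice_range(a, lo, hi), slice_range(b, lo, hi)
    # Matching summaries: treat the range as identical and stop refining.
    if fingerprint(ra) == fingerprint(rb):
        return [], []
    # Small range: compare the items directly instead of splitting further.
    if len(ra) <= leaf_size or len(rb) <= leaf_size:
        sa, sb = set(ra), set(rb)
        return sorted(sa - sb), sorted(sb - sa)
    # Mismatch on a large range: split at a median item and recurse on both halves.
    mid = ra[len(ra) // 2]
    left_a, left_b = reconcile(a, b, lo, mid, leaf_size)
    right_a, right_b = reconcile(a, b, mid, hi, leaf_size)
    return left_a + right_a, left_b + right_b


a = [1, 2, 3, 5, 8, 13, 21]
b = [1, 2, 4, 5, 8, 13, 34]
only_in_a, only_in_b = reconcile(a, b, 0, 100)
print(only_in_a, only_in_b)
```

In this sketch every call recomputes a range summary by scanning the slice, so the local work per range is linear in its size. A store that can return range summaries and split points directly would avoid that scan, which is the kind of backend dependence the text refers to.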