For $PROJECT I am wondering how sparse a bitmap needs to be before it's worth looking at alternatives.

Say I have a 32-bit random seed and it produces a tuple (x_1, x_2, ..., x_n) of attributes through some process we want to analyze. What I'd like is to build an index that lets me identify seed values with, say, x_1=4, or quickly intersect several indexes to find a seed value with x_1=4 and x_2 = 13 and x_3 = 5.

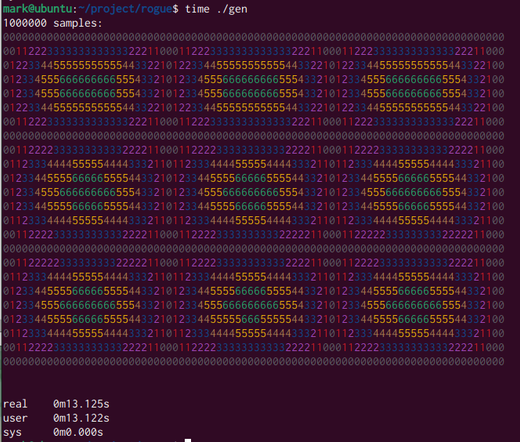

If the domain of x_i is small (say, 16 different values) then we could use a bunch of bitmaps -- they're only 512MiB each. Each bitmap will be only 6% populated, is that enough to consider a fancier representation that compresses the bitmap?

I've read "Searchable compressed representations of very sparse bitmaps" https://www.stevenpigeon.com/Publications/publications/SparseBitmap.pdf but my feeling right now is this isn't a good fit.

There are a bunch of ideas of the form "index a collection of containers, which may be arrays or compressed arrays or bitmaps" which probably only work well when the set is not very evenly distributed.