What the Air Force doesn’t want us to notice on election night

https://www.counterpunch.org/2024/11/05/what-the-air-force-doesnt-want-us-to-notice-on-election-night

reposted: https://www.nationofchange.org/2024/11/05/what-the-air-force-doesnt-want-us-to-notice-on-election-night

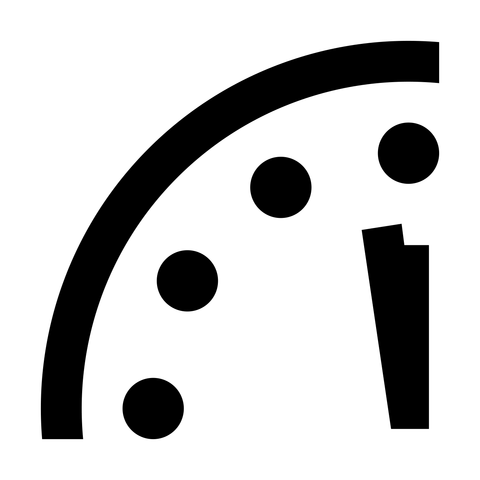

* while everyone’s attention is on the U.S. presidential election, the U.S. Air Force will test-launch an intercontinental ballistic missile (ICBM) with a dummy hydrogen bomb

* the Air Force does this several times a year

* launches are always at night, while Americans are sleeping

#capitalism #militarism #MIC #MilitaryIndustrialComplex #NuclearWeapons #ExistentialRisks #ICBM #USAF