古の刃 (Ancient Blades) | ポイズン雷花

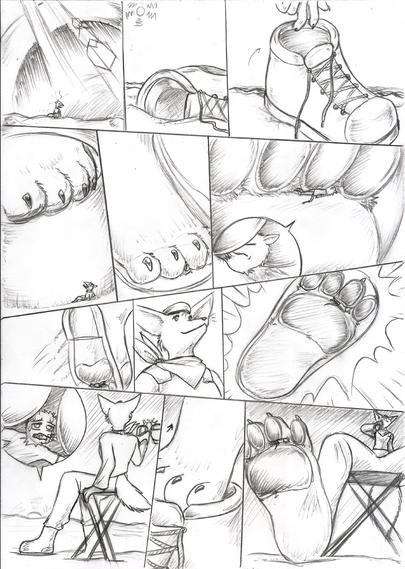

古に生まれし刃 我の心を鍛えよう 限無き野心を 打ち砕く剣と成りたまえ 志を共にする民よ 我に付いたまえ 安寧の地を この手取戻す為!! <> Blade born in ancient times, temper my heart. Become the sword that shatters boundless ambition. People who share my resolve, follow me, so that these hands may take back the land of peace!!


#KPopMonday #KRnB

No #KRnB list is complete without Crush... his latest EP is amaze. Plus... Sumin!

Crush - UP ALL NITE (Feat. SUMIN) | 소수빈 ➡ Crush | #라이브와이어 (Live Wire) Ep. 11 | Mnet, aired 250829

https://www.youtube.com/watch?v=ovOmPW3PutI

#LiveWire #Crush #Sumin
