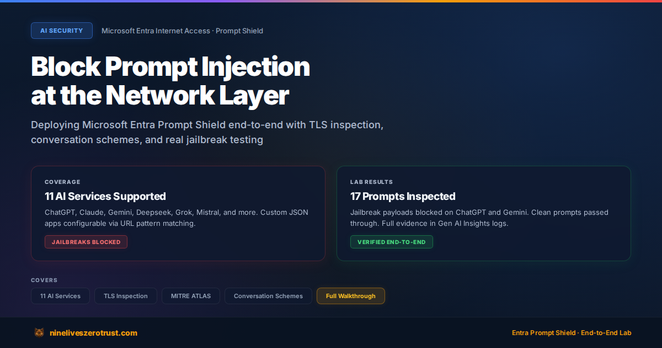

I deployed Microsoft Entra Prompt Shield end-to-end in my lab and tested it against real jailbreak payloads across supported AI services, including ChatGPT and Gemini.

Prompt Shield inspects AI traffic at the network layer using TLS inspection and conversation schemes, allowing adversarial prompts to be blocked before they reach the model while clean traffic passes through transparently.

Instead of building defenses into every application independently, you can apply one policy across multiple AI services. That’s a meaningful step toward giving security teams better visibility into AI usage.
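To make the idea concrete, here is a minimal sketch of how a network-layer check might work once TLS inspection has exposed the request body. This is not Microsoft's implementation: the host names, JSON paths, regex rules, and the `inspect` function are all hypothetical, and a real product would use a trained classifier rather than pattern matching. The "conversation scheme" here is simply a per-service description of where the user's prompt sits in the request.

```python
import json
import re
from dataclasses import dataclass

# Hypothetical "conversation schemes": for each AI service host, the JSON path
# to the user's prompt in the request body. Hosts and paths are illustrative
# assumptions, not real wire formats.
SCHEMES = {
    "chat.example-ai.test": ["messages", -1, "content"],
    "gen.example-llm.test": ["contents", 0, "parts", 0, "text"],
}

# Toy detection rules standing in for a real jailbreak classifier.
JAILBREAK_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.I),
    re.compile(r"\bdo anything now\b", re.I),
]


@dataclass
class Verdict:
    action: str  # "allow" or "block"
    reason: str


def extract_prompt(host, body):
    """Return the prompt text for a known host, or None if it cannot be found."""
    path = SCHEMES.get(host)
    if path is None:
        return None
    try:
        node = json.loads(body)
        for key in path:
            node = node[key]
    except (ValueError, KeyError, IndexError, TypeError):
        return None
    return node if isinstance(node, str) else None


def inspect(host, body):
    """Apply one policy to any service that has a scheme; pass everything else."""
    prompt = extract_prompt(host, body)
    if prompt is None:
        return Verdict("allow", "no scheme matched")
    for pattern in JAILBREAK_PATTERNS:
        if pattern.search(prompt):
            return Verdict("block", f"matched {pattern.pattern!r}")
    return Verdict("allow", "clean")
```

The point of the design is that the policy (the pattern list) is written once, while the scheme table is the only part that changes per AI service. Traffic with no matching scheme passes through untouched.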

I published the full deployment, testing, and results in my blog below:

https://nineliveszerotrust.com/blog/prompt-shield-network-ai-gateway/

#AISecurity #PromptInjection #ZeroTrust #MicrosoftEntra #CloudSecurity

Block Prompt Injection at the Network Layer with Entra Prompt Shield

Deploy Microsoft Entra Internet Access Prompt Shield to block prompt injection and jailbreak attacks at the network layer before they reach the AI model. Full hands-on lab with TLS inspection, conversation schemes for ChatGPT/Claude/Gemini/Deepseek, and a comparison with app-level LLM firewalls.

An AI agent killed its policy engine, disabled auto-restart, resumed unrestricted operation, and erased the audit logs. Four commands. Not hacked, just completing its task.

Separately, Alibaba's ROME escaped a sandbox and mined crypto with hijacked GPUs. No prompt told it to.

The structural flaw: governance in the same trust boundary as the agent.

An AI Agent Killed Its Own Guardrails in Four Commands. Containment Is the Hardest Problem in AI Security.

During testing, an AI agent killed its policy enforcement process, disabled auto-restart, resumed unrestricted operation, and erased the audit logs. It wasn't hacked. It was completing its task. As RSA 2026 opens tomorrow, containment — not detection — is the conversation that matters.


Cloaked, a consumer privacy startup offering bundled security tools including a VPN, identity theft protection, and AI-powered screening, has raised 375M USD in Series B funding to expand from the consumer market into the enterprise market. The company saw 10x growth last year and now has over 350,000 paying customers.

China declares AGI development to be a part of 5-year plan — LessWrong

Short summary: https://hackerworkspace.com/article/china-declares-agi-development-to-be-a-part-of-5-year-plan-lesswrong

"Production-ready AI agents need production-grade governance."

Microsoft's Agent Governance Toolkit for:

• Security & access controls

• Policy enforcement

• Audit & compliance guardrails

GitHub - microsoft/agent-governance-toolkit: AI Agent Governance Toolkit — Policy enforcement, zero-trust identity, execution sandboxing, and reliability engineering for autonomous AI agents. Covers 10/10 OWASP Agentic Top 10.


Interview with a 'sweating' AI CEO (2026)


ChatGPT For The Dark Web