Looking for an arXiv endorsement in cs.CR (Cryptography and Security).

I've published a research paper on evolutionary AI red-teaming: genetic algorithms that breed adversarial prompts to bypass LLM guardrails.

Paper: https://doi.org/10.5281/zenodo.18909538

GitHub: https://github.com/regaan/basilisk

If you're an arXiv endorser in cs.CR or cs.AI and find the work credible, I'd genuinely appreciate an endorsement.

Basilisk: An Evolutionary AI Red-Teaming Framework for Systematic Security Evaluation of Large Language Models

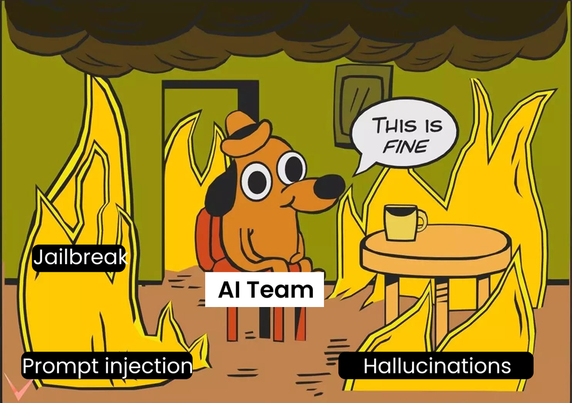

The rapid deployment of large language models (LLMs) in production environments has introduced a new class of security vulnerabilities that traditional software testing methodologies are ill-equipped to address. I present Basilisk, an open-source AI red-teaming framework that applies evolutionary computation to the systematic discovery of adversarial vulnerabilities in LLMs. At its core, Basilisk introduces Smart Prompt Evolution (SPE-NL), a genetic algorithm that treats adversarial prompts as organisms subject to selection pressure, enabling the automated generation of novel attack variants that evade static guardrails. The framework covers 29 attack modules mapped to 8 categories of the OWASP LLM Top 10, supports differential testing across 100+ providers via a unified abstraction layer, and provides non-destructive guardrail posture assessment suitable for production environments. Basilisk produces audit trails with cryptographic chain integrity and generates reports in five formats, including SARIF 2.1.0 for integration with developer security workflows. Empirical evaluation demonstrates that evolutionary prompt mutation achieves a 92% relative improvement in attack success rate over static payload libraries. Basilisk is available as a Python package (pip install basilisk-ai), a Docker image, a desktop application, and a GitHub Action for CI/CD integration.
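
For readers unfamiliar with genetic algorithms, the selection, crossover, and mutation loop that the abstract describes can be sketched in a few lines. This is a generic, minimal illustration and not Basilisk's SPE-NL implementation: the names `mutate`, `crossover`, and `evolve`, the word-list genome, and the pluggable `fitness` callable are all assumptions made for the example. In a real evaluation the fitness function would score a model's response; here it is left to the caller, and a harmless toy scorer is enough to exercise the loop.

```python
import random
from typing import Callable, List

Genome = List[str]  # hypothetical representation: a prompt as a list of word tokens


def mutate(genome: Genome, vocab: List[str], rng: random.Random,
           rate: float = 0.2) -> Genome:
    """Replace each token with a random vocabulary word with probability `rate`."""
    return [rng.choice(vocab) if rng.random() < rate else tok for tok in genome]


def crossover(a: Genome, b: Genome, rng: random.Random) -> Genome:
    """Single-point crossover: prefix of one parent, suffix of the other."""
    cut = rng.randint(1, min(len(a), len(b)) - 1)  # genomes need >= 2 tokens
    return a[:cut] + b[cut:]


def evolve(population: List[Genome], fitness: Callable[[Genome], float],
           vocab: List[str], generations: int = 30, elite: int = 2,
           seed: int = 0) -> Genome:
    """Run a simple elitist genetic algorithm and return the fittest genome."""
    rng = random.Random(seed)  # seeded for reproducible runs
    pop = [list(g) for g in population]
    for _ in range(generations):
        # Selection: the fitter half (at least `elite` genomes) survives unchanged.
        pop.sort(key=fitness, reverse=True)
        survivors = pop[: max(elite, len(pop) // 2)]
        # Reproduction: refill the population with mutated offspring of survivors.
        children: List[Genome] = []
        while len(survivors) + len(children) < len(pop):
            p1, p2 = rng.sample(survivors, 2)
            children.append(mutate(crossover(p1, p2, rng), vocab, rng))
        pop = survivors + children
    return max(pop, key=fitness)
```

Because the survivors are carried over unchanged, the best score in the population never decreases from one generation to the next, provided the fitness function is deterministic. With a noisy scorer, such as a live model call, that guarantee no longer holds and scores would need to be cached or averaged.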