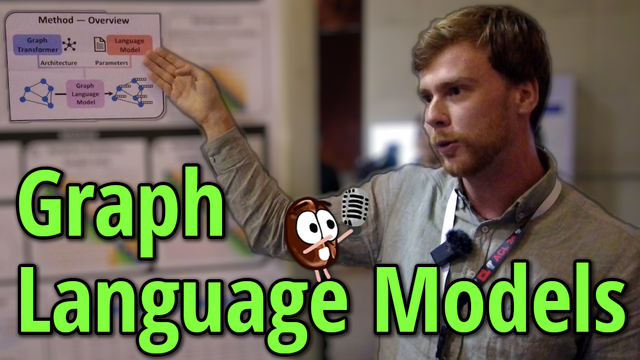

How do you make powerful LLMs understand graphs and their structure? 🕸️ With Graph Language Models!

They take a pre-trained LLM and equip it with the ability to process graphs. Watch if you're curious! 👇

📺 https://youtu.be/JcHeaONGbmQ

(Hint: it's about position embeddings, as the author explained at #ACL2024 🔴)