Homepage: https://briemadu.github.io/

B. Madureira


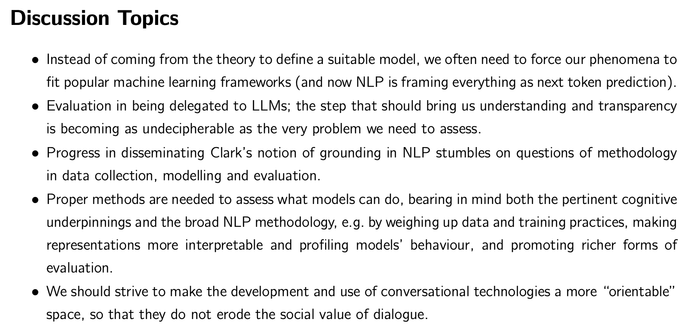

You'll find Brie's amazing work on "incrementally enriching the common ground" at your favourite supplier of open science (the ACL anthology), but if you're at all interested in the past, present, and future of computational modelling of conversational grounding, do also read the fantastic "integrative overview" she wrote.

Incrementally enriching the common ground (publish.UP)

When humans engage in a conversation, many cognitive, linguistic and social forces and constraints are at play simultaneously. In particular, each utterance incrementally enriches the participants' common ground by making new bits of information accumulate into their shared set of knowledge, experiences, suppositions, beliefs and memories. To make it happen, they must understand each other, keep track of what has been shared, and collaborate to solve misunderstandings. Modelling this grounding process is evidently very challenging, but also vital both to shed light on how human dialogue works and to build applications that can safely interact with humans. The field of natural language processing has seen advancements with data-driven, end-to-end deep learning models, but this prevailing paradigm also has conceptual frailties when it comes to processing dialogue phenomena: in vogue encoders are by design not fully incremental, models are not trained with an explicit grounding signal, hidden representations are not directly interpretable and static datasets abstract away many aspects of interactivity. In this thesis, I propose methods to evaluate the grounding competence of deep learning dialogue models from three perspectives: (i) incremental understanding with timing and revisions; (ii) making information shared and processing the conversation history while considering the interlocutor's perspective; and (iii) requesting clarification while taking actions and dealing with uncertainty in collaborative settings. With a reflective summary of my publications on these three themes, I argue that cognitively motivated evaluation is an effective and useful approach to appreciate what current dialogue models, including chat-optimised large language models, can do, while standing on firm grounds about their limitations and, most importantly, the ethical concerns raised due to their development and use.

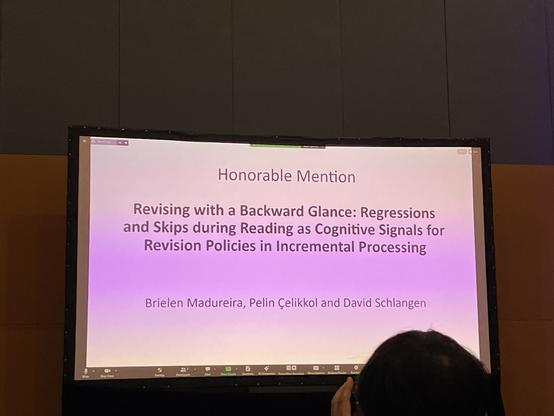

As a nice surprise, this paper ended up getting an “honourable mention” at #CONLL at #EMNLP2023. Great work by first authors @briemadu and Pelin!

Revising with a Backward Glance: Regressions and Skips during Reading as Cognitive Signals for Revision Policies in Incremental Processing

In NLP, incremental processors produce output in instalments, based on incoming prefixes of the linguistic input. Some tokens trigger revisions, causing edits to the output hypothesis, but little is known about why models revise when they revise. A policy that detects the time steps where revisions should happen can improve efficiency. Still, retrieving a suitable signal to train a revision policy is an open problem, since it is not naturally available in datasets. In this work, we investigate the appropriateness of regressions and skips in human reading eye-tracking data as signals to inform revision policies in incremental sequence labelling. Using generalised mixed-effects models, we find that the probability of regressions and skips by humans can potentially serve as useful predictors for revisions in BiLSTMs and Transformer models, with consistent results for various languages.
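To make the notion of a "revision" in the abstract above concrete, here is a minimal Python sketch. It is not the authors' setup: it assumes one simple way of making a tagger incremental, namely re-running it on every growing prefix (often called restart-incremental processing), and it uses a hypothetical rule-based `toy_labeller` whose labels depend on right context. A time step counts as a revision when any label already emitted for an earlier token changes.

```python
from typing import Callable, List, Tuple

# A labeller maps a token prefix to one label per token (assumed interface).
Labeller = Callable[[List[str]], List[str]]


def toy_labeller(prefix: List[str]) -> List[str]:
    """Hypothetical tagger whose decisions depend on the tokens to the right."""
    labels = []
    for i, tok in enumerate(prefix):
        nxt = prefix[i + 1] if i + 1 < len(prefix) else None
        nxt2 = prefix[i + 2] if i + 2 < len(prefix) else None
        if tok == "the":
            labels.append("DET")
        elif tok == "man":
            # "man" is read as a verb once a determiner follows it.
            labels.append("VERB" if nxt == "the" else "NOUN")
        elif tok == "old":
            # "old" becomes the subject noun once "man" turns out to be a verb.
            labels.append("NOUN" if nxt == "man" and nxt2 == "the" else "ADJ")
        else:
            labels.append("NOUN")
    return labels


def restart_incremental(
    tokens: List[str], labeller: Labeller
) -> List[Tuple[str, List[str], List[int]]]:
    """Re-label each prefix and record which earlier positions were edited."""
    previous: List[str] = []
    history = []
    for t in range(1, len(tokens) + 1):
        current = labeller(tokens[:t])
        # Compare only the overlap with the previous hypothesis; the label of
        # the newest token is an addition, not a revision.
        edited = [i for i, (old, new) in enumerate(zip(previous, current)) if old != new]
        history.append((tokens[t - 1], current, edited))
        previous = current
    return history


sentence = ["the", "old", "man", "the", "boats"]
steps = restart_incremental(sentence, toy_labeller)
for token, hypothesis, edited in steps:
    print(f"{token:>6}  revision={bool(edited)}  edited={edited}  {hypothesis}")
```

In this toy run, only the second "the" triggers a revision, editing the labels of "old" and "man". A revision policy in the sense of the abstract would try to predict such time steps in advance, so that recomputation happens only where it is needed.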

Revising with a Backward Glance: Regressions and Skips during Reading as Cognitive Signals for Revision Policies in Incremental Processing

Brielen Madureira, Pelin Çelikkol, David Schlangen

https://arXiv.org/abs/2310.18229 https://arXiv.org/pdf/2310.18229
