The conversation around AI agent skills focuses mainly on text. But for many tasks, words fall short. We need visual skills: skills that provide images and videos as context, not just text.

It's one thing to describe to a robot how to fold a t-shirt. It's another to show it a video.

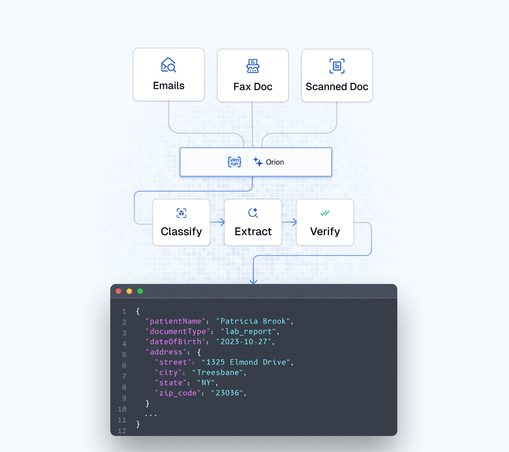

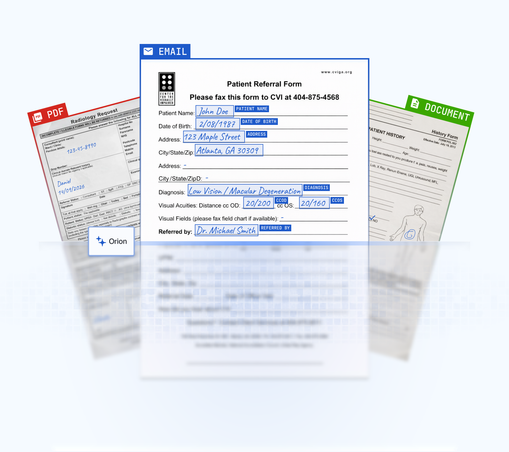

At VLM Run, that's what we're building: visual agents that understand visual data and act on it.