| Web | https://www.tkuhn.org/ |

| ORCID | https://orcid.org/0000-0002-1267-0234 |

| Work | https://knowledgepixels.com/ |

| LinkedIn | https://www.linkedin.com/in/tkuhn/ |

Tobias Kuhn

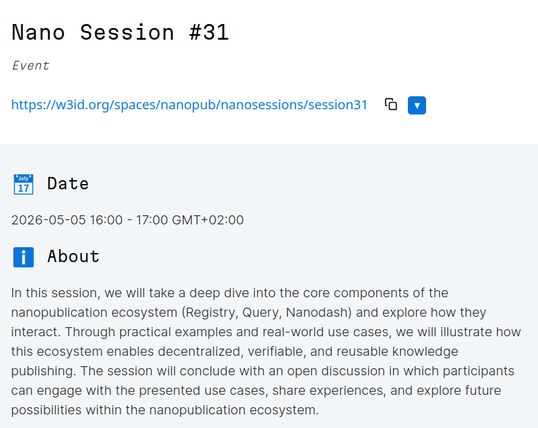

This is just a quick reminder that we'll have our next Nano Session (#31) tomorrow! https://w3id.org/spaces/nanopub/nanosessions/session31

If you plan to attend, visit the link above and press "+" next to "Planned attendants" to announce it, if you like :)

We will also have a segment specifically for folks at the Knowledge Graph Conference (https://www.knowledgegraph.tech/). This segment is 1.5 hours earlier at 14:30 CEST / 8:30 EDT, and everyone is welcome to join! https://w3id.org/spaces/nanopub/nanosessions/session31/kgc-segment

I hope to see you tomorrow! :)
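For anyone who wants to double-check the KGC segment time in their own timezone, here is a minimal sketch using fixed UTC offsets. It assumes CEST is UTC+2 (as stated in the session announcement) and EDT is UTC-4. The year is an assumption, since the posts only say "Tuesday, May 5". The variable names are just for illustration.

```python
from datetime import datetime, timedelta, timezone

# Fixed offsets: CEST = UTC+2 (from the announcement), EDT = UTC-4.
CEST = timezone(timedelta(hours=2), "CEST")
EDT = timezone(timedelta(hours=-4), "EDT")

# Main session start: May 5, 16:00 CEST. The year 2026 is an assumption;
# it does not affect the result because the offsets are fixed.
main_start = datetime(2026, 5, 5, 16, 0, tzinfo=CEST)

# The KGC segment starts 1.5 hours before the main session.
kgc_start = main_start - timedelta(hours=1, minutes=30)

# Same instant, expressed in EDT.
kgc_start_edt = kgc_start.astimezone(EDT)

print(kgc_start.strftime("%H:%M %Z"))
print(kgc_start_edt.strftime("%H:%M %Z"))
```

Swapping in another `timezone(...)` offset gives the local start time for other locations.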

Nano Session #31 is happening next week!

🗓️ Tuesday, May 5

🕓 16:00 CEST (UTC+2)

💻 https://meet.jit.si/nanosession

Deep dive into the nanopublication ecosystem (Registry, Query, Nanodash) and how they interact, with real-world use cases.

Join the discussion and share your experiences!

Don’t forget to indicate your planned participation here:

https://nanodash.knowledgepixels.com/space?id=https://w3id.org/spaces/nanopub/nanosessions/session31

Around five years after I first started working on this document about the vision of a Knowledge Space, I am finally ready to call this version 1.0! 😁 Here it is: https://w3id.org/knowledge-space/

And it's now actually more than just a vision, as all the main parts have been implemented (see "Current Implementations"). I'm always curious to hear feedback, and there will be follow-ups on the implementation side.

RE: https://fediscience.org/@snakemake/116443839004108636

Now, folks, this is my first release of a Snakemake reporter plugin!

I am pretty proud of it, but it would have been impossible without the input of @johanneskoester , @tkuhn and - most of all - @fbartusch !

What is it about?

Have you ever read a #bioinformatics paper and thought: "Huh? How did they get there? This is absolutely not #reproducibleComputing , because I cannot apply it!"

The Snakemake Workflow Management System helps researchers by generating a self-contained HTML report with all bells and whistles (all software metadata, runtime stats and publication-ready figures). And yet, I felt it was time to make writing the materials & methods section easier.

Declare a workflow #nanopub using this template: https://w3id.org/np/RAOT7z3RA0XYlHIikne8rfUUYZrtHyrzXBD1HpI_GvcRk and get a declaration like this: https://w3id.org/np/RAjHDlPDghZzc9ZvQ3uJQNJ9Jd_KAYzZt7dk5PXKgjRyE

Use this reporter plugin and get all the metadata referenced in one other nanopublication: https://w3id.org/np/RAK9xz_ccnu0Xhs4vX2KtqCxX44mmSt6nq-ePLeewMrFE

1/3

🚀 nanopub-elements v0.1.0 is out!

We released nanopub-elements: a Web Components library to display query results and individual nanopubs easily on any webpage.

📦 npm: https://www.npmjs.com/package/@nanopub/nanopub-elements

Documentation, feedback, and issues: https://github.com/nanopublication/nanopub-elements

RE: https://mas.to/@nanopub/116381014075986125

Nano Session #30 is happening today!

Try out Nanodash to make your own personal profile page in four simple steps:

1. Go to https://nanodash.knowledgepixels.com and log in with ORCID (top right)

2. Publish an intro nanopublication as instructed, to establish your identity in the nanopub world

3. Visit an existing profile and choose "add to my own profile" in the dropdown menu for a view you'd like on yours too

4. Depending on the view you have added, you can use the "add..." button to add entries.

And that's it! :) Happy to hear any feedback.

Our next Nano Session #30 is coming up soon!

🗓️ Tuesday, April 14

🕓 16:00 CEST (UTC+2)

💻 Zoom: https://vu-live.zoom.us/j/91057846609?pwd=ON5eF2Ovs1fY8KQUCnEvSM2Eltfod1.1

This session will feature two presentations:

• Mitja Zdouc: *Natural Product Research Data: from one star to five stars*

https://zdouc-lab.github.io/zdouc-lab-website/

• Roberto Pizzolotto: *Biodiversity structured information, from species list to network through nanopublications*

Don’t forget to indicate your planned participation here:

https://nanodash.knowledgepixels.com/space?id=https://w3id.org/spaces/nanopub/nanosessions/session30

See you there!

If you want to create nanopublications for recording citation intents (you authenticate with your ORCID), you can use either of these two @nanopub templates:

1. https://nanodash.knowledgepixels.com/explore?id=RAX_4tWTyjFpO6nz63s14ucuejd64t2mK3IBlkwZ7jjLo

2. https://nanodash.knowledgepixels.com/explore?id=https://w3id.org/np/RA43F9EoOuzF0xoNUnCMNyFsfIqlsuWDdPHCnN0wCdCAw&label=RA43F9EoOu&forward-to-part=true