Steve Blackburn

@wingo

This is reminiscent of the GC strategy we developed in the late 1990s for the JOVE native code Java compiler (https://wirfs-brock.com/allen/things/jove).

It was described in a Lightning Talk I presented at ISMM 2000: https://www.wirfs-brock.com/allen/files/jove/joveismm00.pdf

JOVE

JOVE was a whole-program optimizing native code compiler for Java developed by Instantiations Inc between 1998 and 2002. I was the overall architect and team leader of the development. JOVE compiled programs from Java bytecodes into native Windows x86 exe files. It applied very aggressive optim

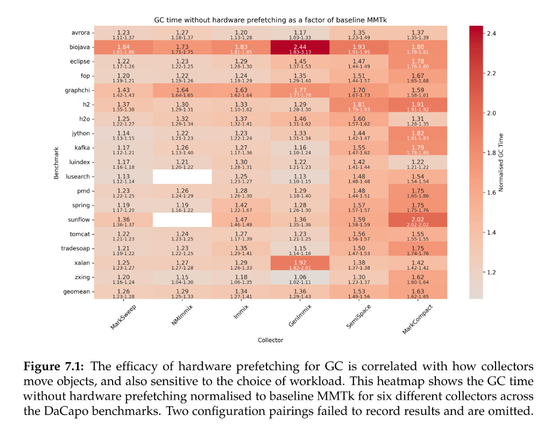

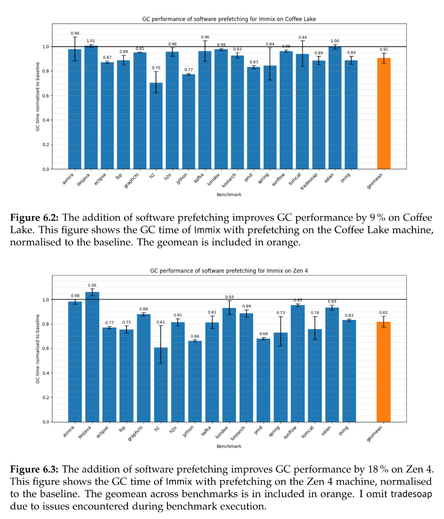

Lots of fun details in Huang's thesis: static vs dynamic prefetching (static is fine), computation of how much one could gain if cache-miss latency were eliminated, what the GC time would be if hardware prefetchers were disabled (20-80% slower; see attached figure); mutator time without prefetchers (sometimes it's better??!?); how to use "perf mem"; all good stuff!

I don't know what's in the water at ANU but they have been doing lots of great work at all levels recently

Claire Huang wrote an undergraduate honours thesis, supervised by @steveblackburn and @caizixian: https://www.steveblackburn.org/pubs/theses/huang-2025.pdf

She uses sampling PEBS counters and the data linear address (DLA) facility on Intel chips to understand the structure and attribution of load latencies in MMTk.

After identifying L1 misses in the trace loop as a significant overhead, she adds prefetching and reduces GC time by 10% or so across a range of benchmarks, and more on Zen4.

@mgaudet I'm glad I could help.

Following up on the start of this thread on microarchitectural performance debugging, you might find Claire Huang's undergraduate thesis interesting (co-supervised by @steveblackburn). She took a very detailed look at GC in terms of cache behaviors, and measured optimization headroom. https://www.steveblackburn.org/pubs/theses/huang-2025.pdf

She also previously led the eBPF tracing in GC work (MPLR'23).

PLDI SRC submission deadline is in 2 days! https://pldi23.sigplan.org/track/pldi-2023-src

PLDI SRC has a two-track model that supports both in-person and remote presentations.

This year's PLDI is also part of FCRC (https://fcrc.acm.org/), which brings together many different and interesting CS conferences.

PLDI 2023 - Student Research Competition - PLDI 2023

PLDI echoes FCRC’s statement regarding recent legislation in Florida. Anyone, from any background, should feel encouraged to participate and contribute to PLDI. Differences – in age, race, gender and sexual orientation, nationality, physical ability, thinking style and experience – bring richness to our efforts in providing quality programs and services for the global computing community. Thus, PLDI is committed to creating an environment that welcomes new ideas and perspectives, and where hostility or other antisocial behaviors are not tolerated. Welcome to the home page of the 44th AC ...