My biggest surprise at #defcon33: in a head-to-head LiveCTF match, one player’s AI bot beat _both_ humans to the punch.

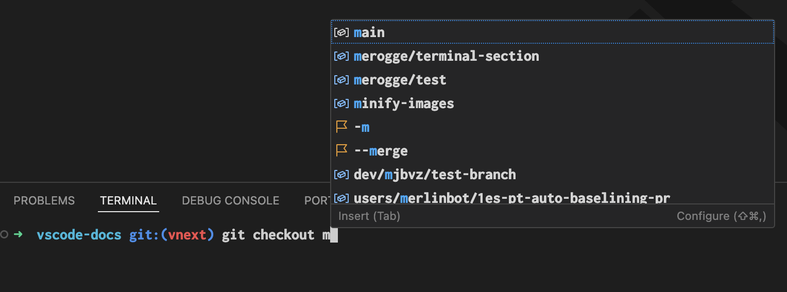

I was commentating the match & was super confused, because I could see the player had only just started writing their solve script: https://www.youtube.com/live/TYn38VfmDRU?si=GLDRin_TN7naMl4Z&t=15180

The player had the bot running in the background and didn’t notice it had already submitted a correct solution.

The craziest part: the bot solved at least two other challenges faster than the player.

This player ended up winning the whole thing, clinching the finals without the bot's help.

Granted:

- LiveCTF challenges are designed to be “easy” for top CTF players, typically solved in 10-30 minutes

- LiveCTF’s format is straightforward and hasn’t changed since last year, so it’s easy to automate

- The bot was built by a world-class CTF player w/ experience building AI tools

But:

- These were non-trivial challenges that required combining multiple concepts (PNG format, internal structure offsets, shellcode)

- The player provided almost no input at all, other than the challenge binary and presumably info on the LiveCTF format & challenge category

As the organizers of LiveCTF, we explicitly allowed AI solvers as an open challenge, but we were all still surprised to see one actually win a round.

It may only be a small turning point, but it marks a change in #CTF. Whether through policy or technical measures, organizers will need to account for AI solvers.