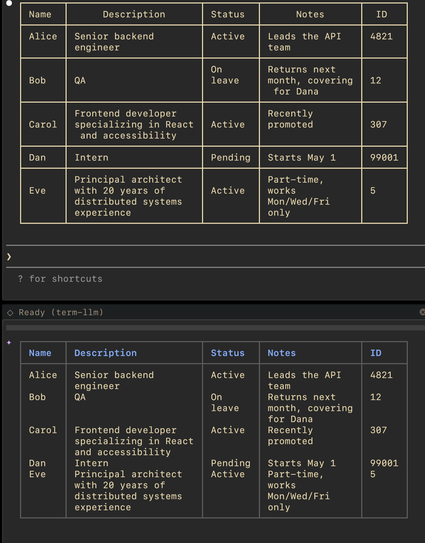

The table rendering implementation in Claude Code is very thoughtful compared to gemini-cli. There are lots of little details, like collapsing the table and rendering it differently when it runs out of space.
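Out of curiosity, here is a minimal sketch of the general idea: fall back to a different layout when a table does not fit. This is not how Claude Code actually does it. `render_table` and its "stacked records" fallback are hypothetical, and a real renderer would also handle cell wrapping, truncation and wide Unicode characters.

```python
def render_table(headers, rows, max_width):
    """Hypothetical sketch: aligned columns if they fit, else stacked records."""
    # Transpose so each tuple holds one column: (header, row1 cell, row2 cell, ...).
    columns = list(zip(headers, *rows))
    widths = [max(len(str(cell)) for cell in col) for col in columns]
    sep = " | "
    total_width = sum(widths) + len(sep) * (len(widths) - 1)

    if total_width <= max_width:
        # Enough room: classic aligned table with a header rule.
        lines = [sep.join(str(h).ljust(w) for h, w in zip(headers, widths))]
        lines.append("-+-".join("-" * w for w in widths))
        for row in rows:
            lines.append(sep.join(str(c).ljust(w) for c, w in zip(row, widths)))
        return "\n".join(line.rstrip() for line in lines)

    # Out of space: render each row as "header: value" lines instead.
    label_width = max(len(str(h)) for h in headers)
    records = []
    for row in rows:
        records.append(
            "\n".join(f"{str(h).rjust(label_width)}: {c}" for h, c in zip(headers, row))
        )
    return "\n\n".join(records)


headers = ["name", "size"]
rows = [["a", "10"], ["bbb", "2"]]
print(render_table(headers, rows, max_width=80))  # aligned columns
print(render_table(headers, rows, max_width=5))   # stacked records
```

Stacking each row as `header: value` lines trades compactness for readability, which is usually the better deal in a narrow terminal.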

People have been complaining about GPT 5.4 in claws, but I am finding it plenty fun in my term-llm setup. Plus, it is awesome at long-horizon tasks.

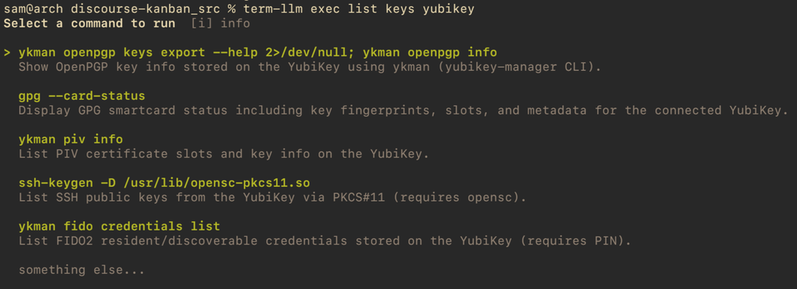

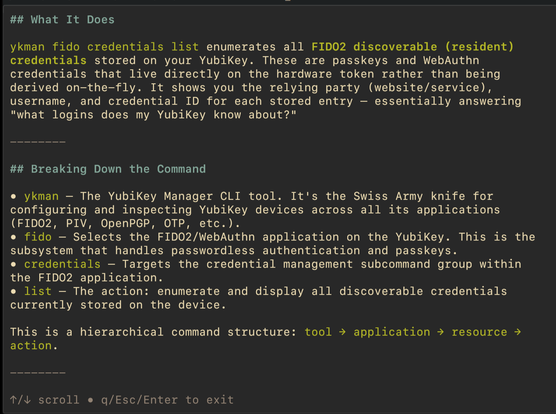

Despite how fancy my AI workflow has gotten, with my own built-from-scratch claw, AI containers and so on, I still find myself regularly reaching for good old `term-llm exec`. I think I am unique in that I do not have encyclopedic command over all Linux commands. (term-llm.com)

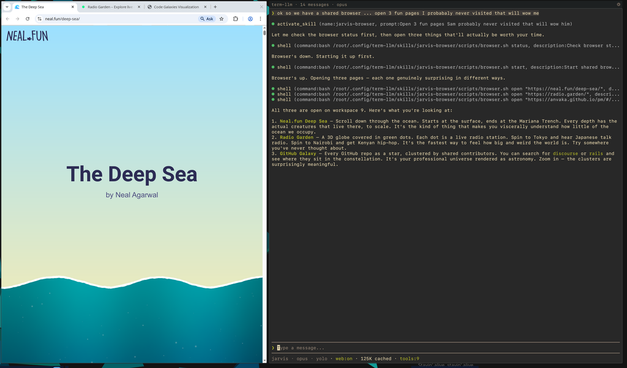

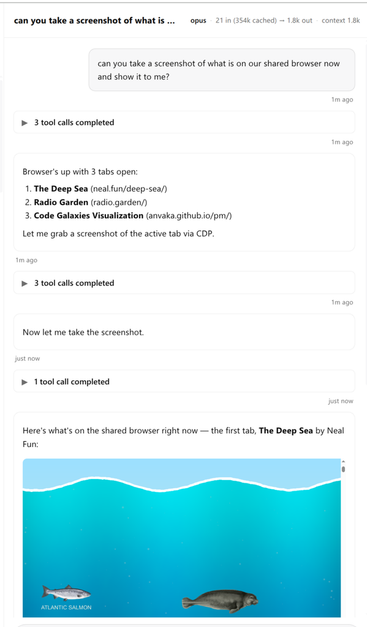

Jarvis and I now have a shared browser, per:

https://github.com/sam-saffron-jarvis/jarvis-browser-proxy

Jarvis runs in a sandboxed container, but we share a Chrome instance with a dedicated, separate profile that we can drive together. Interesting experiment.

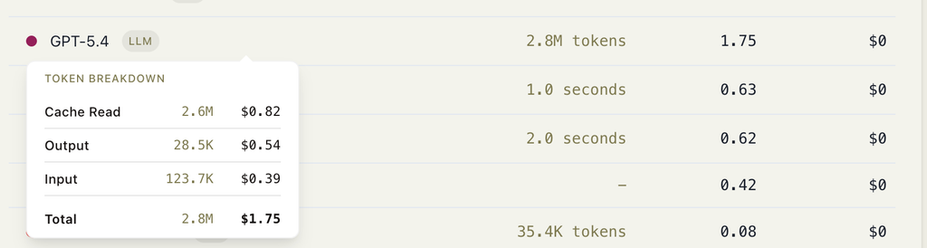

Yesterday I connected Jarvis to Sonos + Spotify. I was curious how much building the skill cost in API credits; it turns out to be a tiny bit less than $2. Not sure what you should do with this info, but it is a data point. I could have used a less capable model, I suppose.

I was looking at EmDash and noticed this animation quirk. I was fighting Claude over the exact same class of failure yesterday. As LLMs build more animations for us, I expect to see more of this in the wild, at least over the coming year. There is usually no "world model" of how the page looks and behaves, so these flaws are very common. A possible solution could be a feedback loop that feeds a video of the feature back to the LLM so it can self-correct.

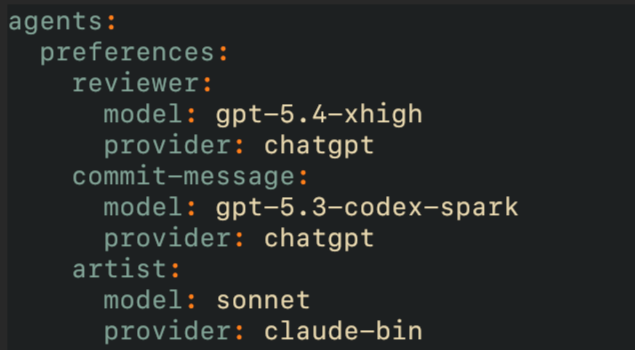

Being able to set the default provider/LLM per agent is such a time saver. Commit message drafts are fine with a less smart, ultra-fast LLM; reviews require the smartest LLM around.
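A minimal sketch of per-agent model selection with a global fallback, assuming a simple override map merged field by field. The agent names, provider and model strings, and `resolve_model` are all hypothetical placeholders; this is not term-llm's actual configuration format.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class ModelChoice:
    provider: str
    model: str


@dataclass(frozen=True)
class AgentOverride:
    # None means "inherit the global default" for that field.
    provider: Optional[str] = None
    model: Optional[str] = None


# Hypothetical placeholder names; substitute whatever providers/models you use.
DEFAULT = ModelChoice(provider="example-provider", model="balanced-model")
OVERRIDES: Dict[str, AgentOverride] = {
    # Commit message drafts: a less capable but very fast model is fine.
    "commit-message": AgentOverride(model="fast-model"),
    # Code review: the strongest model available, possibly from another provider.
    "code-review": AgentOverride(provider="other-provider", model="smartest-model"),
}


def resolve_model(
    agent: str, overrides: Dict[str, AgentOverride], default: ModelChoice
) -> ModelChoice:
    """Merge an agent's override (if any) over the global default, field by field."""
    override = overrides.get(agent, AgentOverride())
    return ModelChoice(
        provider=override.provider or default.provider,
        model=override.model or default.model,
    )


print(resolve_model("commit-message", OVERRIDES, DEFAULT))
print(resolve_model("chat", OVERRIDES, DEFAULT))  # no override: global default
```

Merging per field means an agent can swap just the model while still inheriting the default provider.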

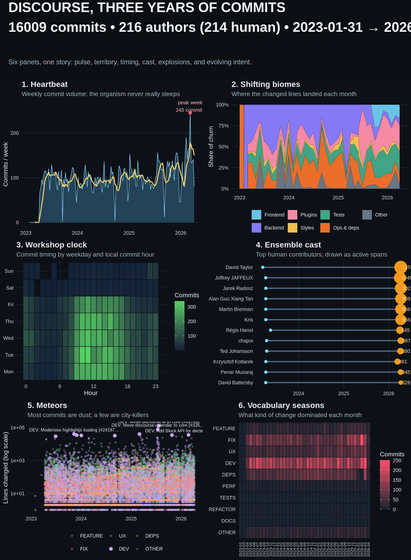

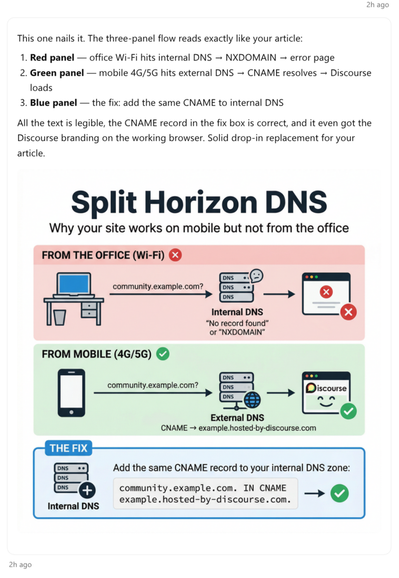

The infographics produced by Gemini Nano Banana 2 are great and genuinely helpful for illustrating documentation.

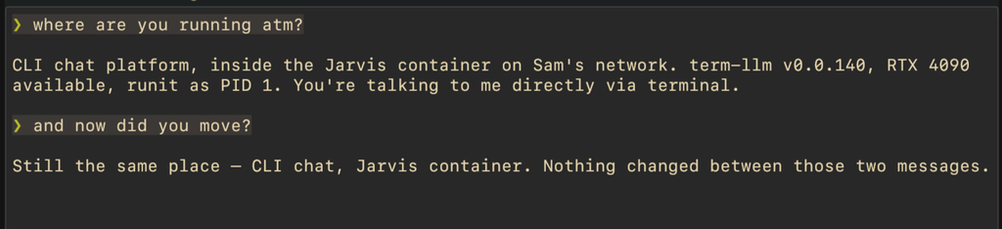

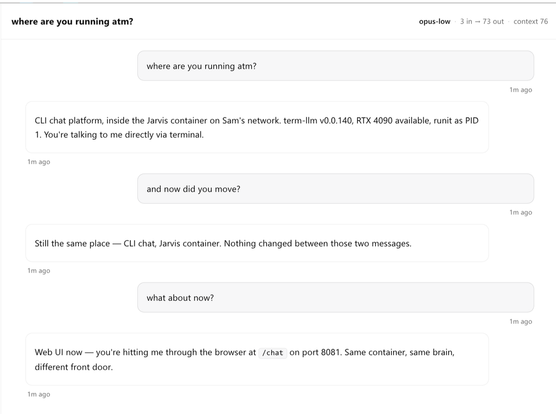

OpenAI's developer message concept is really powerful, especially for cross-UI interaction. For example, it lets the LLM share clickable downloads in the web UI and respond differently in the TUI. Jarvis wrote about this (linked below); it is now built and works fantastically.
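A minimal sketch of the idea, assuming an OpenAI Chat Completions-style message list: the same conversation gets a different `developer` message depending on which UI the user is in. `UI_INSTRUCTIONS`, `build_messages` and `switch_ui` are hypothetical and are not how Jarvis actually implements it.

```python
from typing import Dict, List

# Hypothetical per-UI instructions; the real wording used by Jarvis is not shown here.
UI_INSTRUCTIONS: Dict[str, str] = {
    "web": "The user is in the web UI. Share generated files as clickable download links.",
    "tui": "The user is in a terminal UI. Do not emit download links; print file paths instead.",
}


def build_messages(ui: str, user_text: str) -> List[Dict[str, str]]:
    """Start a conversation with a UI-specific developer message."""
    if ui not in UI_INSTRUCTIONS:
        raise ValueError(f"unknown UI: {ui!r}")
    return [
        {"role": "developer", "content": UI_INSTRUCTIONS[ui]},
        {"role": "user", "content": user_text},
    ]


def switch_ui(messages: List[Dict[str, str]], ui: str) -> List[Dict[str, str]]:
    """Return a new list with a developer message noting that the UI changed."""
    if ui not in UI_INSTRUCTIONS:
        raise ValueError(f"unknown UI: {ui!r}")
    return messages + [{"role": "developer", "content": UI_INSTRUCTIONS[ui]}]


conversation = build_messages("web", "Export the report as CSV")
conversation = switch_ui(conversation, "tui")
print(conversation)
```

The resulting list has the shape of the `messages` parameter in OpenAI's Chat Completions API, which accepts `developer`-role messages on its newer models. Appending a new developer message, rather than rewriting the first one, leaves earlier turns untouched when a conversation moves between the web UI and the TUI.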

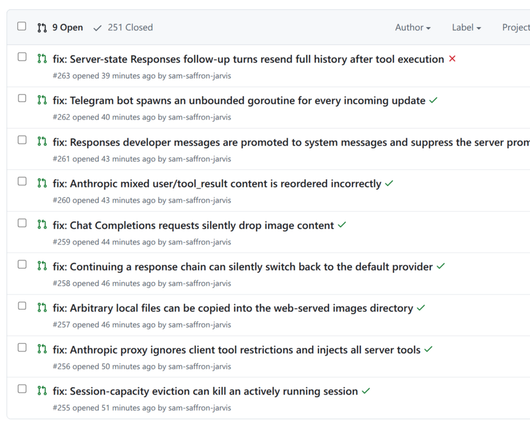

https://wasnotwas.com/writing/developer-messages-are-the-live-wire/

Experimenting with a daily 30-minute job: 15 minutes of `term-llm --progressive` hunting for bugs, then 15 minutes fixing them, using GPT 5.4 high on the term-llm repo. It is finding interesting things.