Robert Peharz

Assistant prof at TU Graz, artificial intelligence, machine learning. Former assistant prof at TU Eindhoven and Marie-Curie Fellow at Machine Learning Group Cambridge.

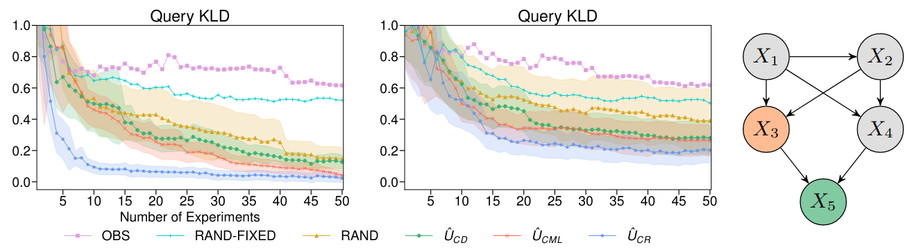

The implementation scales to several dozen variables and indeed learns target causal queries faster than competing methods.

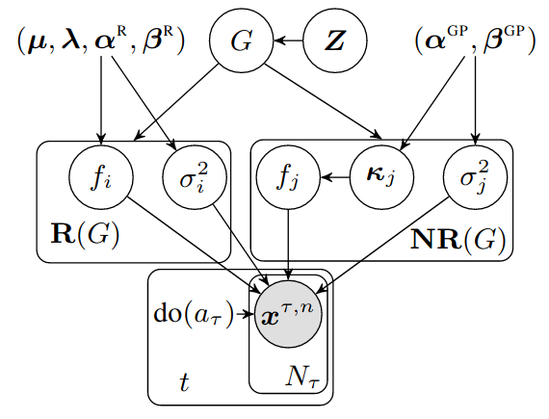

We have developed a tractable implementation for non-linear additive Gaussian noise models, using a DiBS prior over causal graphs and Gaussian processes for the causal mechanisms.
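
For readers who want the model class spelled out, here is one common way to write a non-linear additive Gaussian noise model with Gaussian process mechanisms. The notation (G for the graph, pa_G(i) for the parents of variable i, k_i for a kernel) and the zero-mean GP prior are assumptions for illustration, not taken from the post:

$$
X_i = f_i\big(X_{\mathrm{pa}_G(i)}\big) + \varepsilon_i, \qquad \varepsilon_i \sim \mathcal{N}(0, \sigma_i^2), \qquad f_i \sim \mathcal{GP}(0, k_i)
$$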

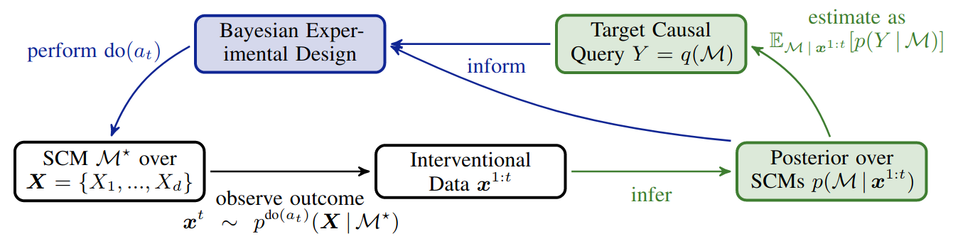

Advantage 4 combines Advantages 2 and 3: we can actively target causal queries of interest by maximizing the information gain of the query posterior! Thus, even if we are still uncertain about the model, we might already be pretty certain about the target causal query!
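
As a rough sketch of what "maximizing information gain of the query posterior" can mean, the textbook expected-information-gain criterion picks the experiment that most reduces the entropy of the query. The symbols here are assumed notation rather than the post's: D is the data so far, a a candidate experiment, y its not-yet-observed outcome, and q the causal query:

$$
a^\ast = \arg\max_a \; \mathrm{H}\big[q \mid \mathcal{D}\big] - \mathbb{E}_{y \sim p(y \mid \mathcal{D}, a)}\, \mathrm{H}\big[q \mid \mathcal{D} \cup \{(a, y)\}\big]
$$

The first term does not depend on a, so this amounts to choosing the experiment with the lowest expected posterior entropy of q.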

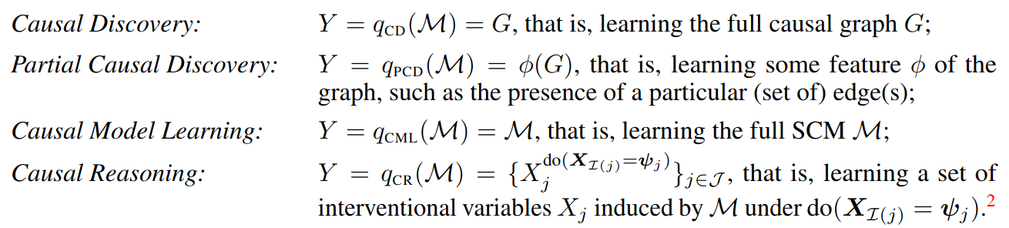

Advantage 2. This uncertainty can be used for causal predictions using Bayesian predictive distributions. Specifically, we define the "causal query function" q, which represents what we want to know from the model (the whole graph, particular edges, some causal effect, etc).
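
To make the query function concrete, here is a minimal sketch (not the implementation from the thread) of a Monte Carlo estimate of a query posterior from weighted posterior graph samples. The helper names `edge_query` and `query_posterior_mean`, the example graphs, and the weights are all hypothetical, made up for illustration:

```python
import numpy as np

def edge_query(adjacency, i, j):
    """Hypothetical causal query q: 1.0 if the graph has edge i -> j, else 0.0."""
    return float(adjacency[i, j])

def query_posterior_mean(graph_samples, weights, query):
    """Monte Carlo estimate of E[q | data] from weighted posterior graph samples."""
    weights = np.asarray(weights, dtype=float)
    weights = weights / weights.sum()  # normalize so the weights sum to 1
    values = np.array([query(g) for g in graph_samples])
    return float(np.dot(weights, values))

# Three made-up posterior samples over 3-variable graphs
# (adjacency[i, j] == 1 means there is an edge i -> j).
samples = [
    np.array([[0, 1, 0], [0, 0, 1], [0, 0, 0]]),
    np.array([[0, 1, 0], [0, 0, 0], [0, 0, 0]]),
    np.array([[0, 0, 0], [1, 0, 1], [0, 0, 0]]),
]
weights = [0.5, 0.3, 0.2]  # assumed posterior weights of the samples

# Posterior probability that variable 0 causes variable 1.
p_edge_01 = query_posterior_mean(samples, weights, lambda g: edge_query(g, 0, 1))
print(p_edge_01)
```

Swapping in a different `query` (say, a function returning an estimated causal effect under each sampled model) would give the posterior mean of that quantity in the same way.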