riyer


Data Scientist & Moral Psychologist. Now @ http://PsychOfTech.org + USC Neely Center. Former civic integrity/AI/Newsfeed @ Facebook, Cofounder @ Ranker/CivilPolitics.

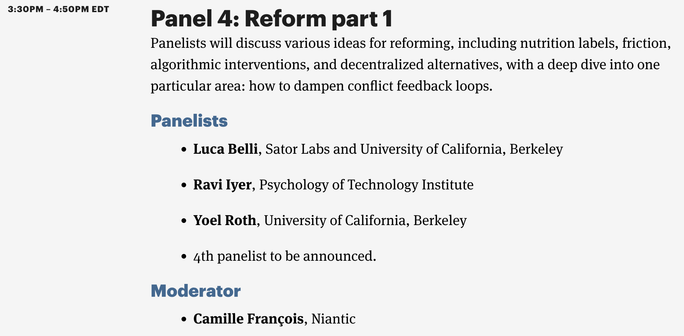

Join us on April 28-29 for "Optimizing for What? Algorithmic Amplification and Society", an event curated w/ @randomwalker exploring how online amplification works and what can be done to mitigate its harms and take advantage of its benefits. RSVP: https://www.eventbrite.com/e/optimizing-for-what-algorithmic-amplification-and-society-tickets-558764247907

@chris +1 to all these platforms being ActivityPub-compatible. I'm not sure if Mastodon will reach critical mass or be good enough to replace Twitter, but I would bet that all these systems combined could build something with escape velocity. Project Narwhal might be an interesting fork as well: https://www.thenarwhalproject.com/

Reddit just opened its call for Data Science interns for this summer, with teams recruiting MS and PhD students. I'm specifically looking for a PhD student to work with me on Community -- check out this Reddit post to learn more!

https://www.reddit.com/r/CompSocial/comments/109am3a/love_reddit_why_dont_you_intern_there_call_for/

To build on this work showing how deprecating engagement incentives for sensitive topics improves outcomes for users and society, I'm hopeful we can:

1) Audit algorithms across platforms for perverse engagement incentives (see this post for more on how: https://psychoftech.substack.com/p/defining-meaningful-algorithmic-transparency).
2) Align on a societal definition of sensitive content. This work relies on a common definition of what content we feel should or should not be optimized for engagement. Ideally, these lines are drawn by the world, not by private companies.
3) Decide on alternative incentives for important topics. Some kinds of engagement are likely more aligned with user value (e.g. explicit positive reactions from diverse audiences), and we should study those as potential alternatives.

Building on @Jhorwitzz's excellent article about FB's efforts to change its relationship to political content, I wrote this follow-up on what we can learn from this work. https://psychoftech.substack.com/p/when-should-companies-optimize-for

@ernie now hopefully folks will build on the content to keep making better and better products

Great answer from my ex-coworker Glenn Ellingson to the question “If you could wave a magic wand and fix one issue in the integrity work space, what would it be?” Answer: "convince the core product/growth team to measure themselves on value created (and lost) for the community of users, not short-term engagement. Even known-flawed measures like net promoter score (NPS) seem more likely to build lasting product value than counting likes & comments" Worth reading the full article @ https://www.everythinginmoderation.co/glenn-ellingson-meta-content-harms/