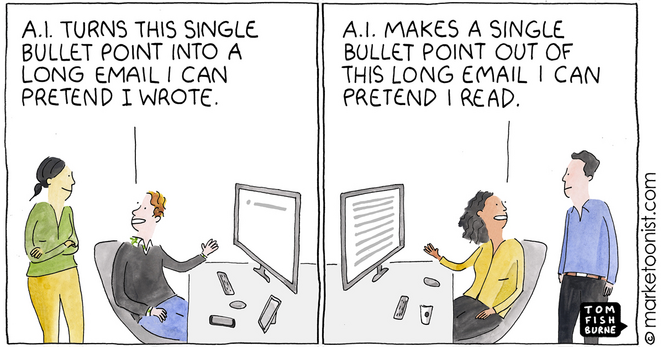

AI Written, AI Read cartoon - Marketoonist | Tom Fishburne

One piece of slang that has long embodied the short-attention-span Internet age is TL;DR, short for “too long; didn’t read.” With the explosion of generative AI tools, we’re rapidly entering the age of TL;DW: “too long; didn’t write.” A January survey from Fishbowl found that 40% of nearly 12,000 workers have used ChatGPT