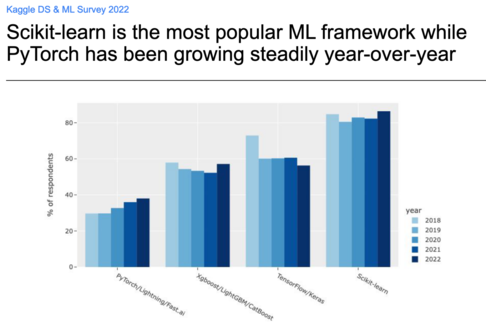

[ANN]: to avoid typo-squatters on PyPI, years ago we uploaded a magical "sklearn" package whose single dependency is the actual "scikit-learn" package.

However, many people are confused by this magic package alias and its fixed 0.0 version number.

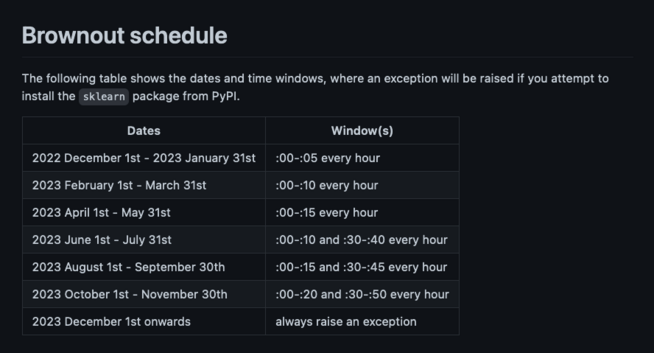

Next month, this alias will start to progressively "brown out": "pip install sklearn" will fail at predictable times, with an informative error message telling the user to run "pip install scikit-learn" instead.
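
If you want to check whether a project still installs through the alias, here is a minimal sketch that scans the text of a requirements file for a bare "sklearn" entry. The helper name `find_sklearn_alias` and the simplified matching rule are assumptions for illustration, not part of any official tooling, and the pattern does not cover every requirement syntax pip accepts.

```python
import re

# Hypothetical helper, not part of scikit-learn or pip.
# Matches a requirement whose project name is exactly "sklearn",
# optionally followed by a version specifier, extras, or a marker.
_ALIAS = re.compile(r"^\s*sklearn\s*(?:[=<>!~;\[].*)?$", re.IGNORECASE)


def find_sklearn_alias(requirements_text):
    """Return the 1-based line numbers that depend on the 'sklearn' alias."""
    hits = []
    for lineno, line in enumerate(requirements_text.splitlines(), start=1):
        spec = line.split("#", 1)[0]  # drop trailing comments
        if _ALIAS.match(spec):
            hits.append(lineno)
    return hits


example = "numpy\nsklearn\nscikit-learn>=1.0\nsklearn==0.0  # alias\n"
print(find_sklearn_alias(example))
```

Any line it reports should be changed to "scikit-learn". Only the install name changes: the import name in Python code stays `import sklearn`.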

https://github.com/scikit-learn/sklearn-pypi-package#goal