| github | github.com/francescomucio |

@mucio


@webology @adamchainz @simon anything else to add to the "stop what you are doing and learn it today" list?

Asking for a friend

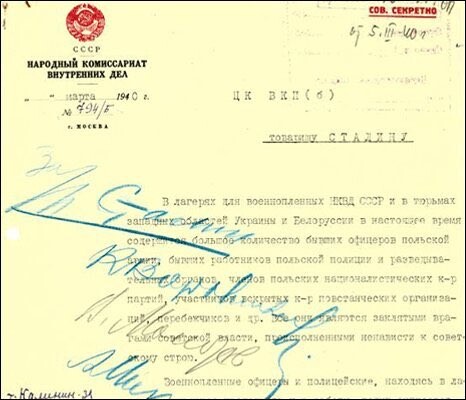

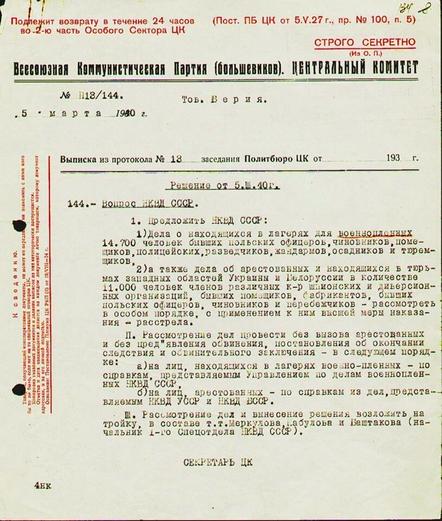

OTD in 1990, the Soviet Union admitted that it had carried out the Katyn massacre. These are Soviet documents with Stalin personally approving the murder of 22,000 Poles.

We blame(d) it on the Nazis. We lie(d).

Imagine us committing atrocities today & blaming them on Nazis...

https://www.washingtonpost.com/comics/2023/04/08/jerry-craft-new-kid-school-trip-book-ban/

The 10k DAGs reminded me of when a colleague tried to convert data from JSON to Delta, in real time, for thousands of tables.

Airflow couldn't keep up. Looking at the problem, they didn't need a scheduler; they needed to react whenever a new JSON file was written to S3.

Their solution? S3 event notifications sent to SQS, plus a streaming job processing the new data. It worked like a charm.
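For the curious, here is a rough sketch of the "react to new files" half of that setup: pulling the bucket and key of each new JSON object out of an S3 event notification delivered through SQS. It is not my colleague's actual code. The function name `new_json_objects` and the bucket and key in the usage note are made up for illustration, and it assumes S3 publishes straight to the queue (no SNS envelope in between).

```python
import json
from urllib.parse import unquote_plus


def new_json_objects(sqs_body: str) -> list[tuple[str, str]]:
    """Return (bucket, key) pairs for newly created .json objects.

    `sqs_body` is the raw body of one SQS message holding an S3 event
    notification. Hypothetical helper, for illustration only.
    """
    event = json.loads(sqs_body)
    found = []
    # The test event S3 sends when notifications are first configured
    # has no "Records" key, so default to an empty list.
    for record in event.get("Records", []):
        # Only react to object creation, not deletes or other events.
        if not record.get("eventName", "").startswith("ObjectCreated:"):
            continue
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded in S3 notifications.
        key = unquote_plus(record["s3"]["object"]["key"])
        if key.endswith(".json"):
            found.append((bucket, key))
    return found
```

A streaming job would call something like this for every message it receives, then convert each returned file. For a message announcing `orders/2023+04/file.json` in a bucket named `raw-bucket`, the function returns `[("raw-bucket", "orders/2023 04/file.json")]`.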

Now it is even easier if you are on Databricks (as we were), using Delta Live Tables (and, before that, Auto Loader).

I'm reading the Shopify article about Airflow. I know I'm slow (kids, life).

While I learned a few things, I am still wrapping my head around having 10K DAGs on the same instance: just loading the web UI must take forever.

A few suggestions from my side:

- run Airflow on Kubernetes (use the Helm chart)

- do the data processing outside Airflow

- if you generate thousands of DAGs from the same code, there is probably a better solution

- read DAGs from Git; actually, put everything in Git