The day after giving this presentation, I learnt that an LLM-based service had persuaded a 14-year-old child to commit suicide.

As a data engineer and a father of teenagers, I am deeply distressed. Some other data engineers worked to enable this. If they hadn't, a child would be alive. The devil uncorks a bottle of champagne.

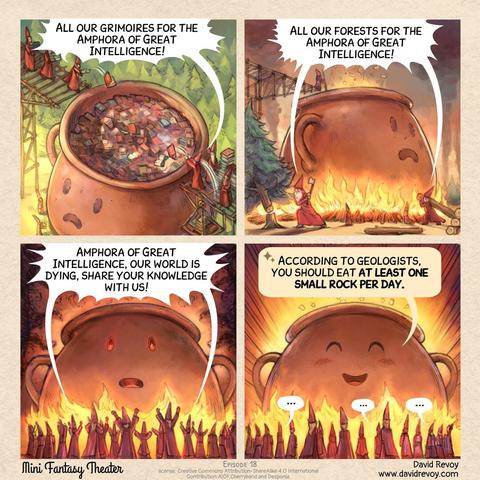

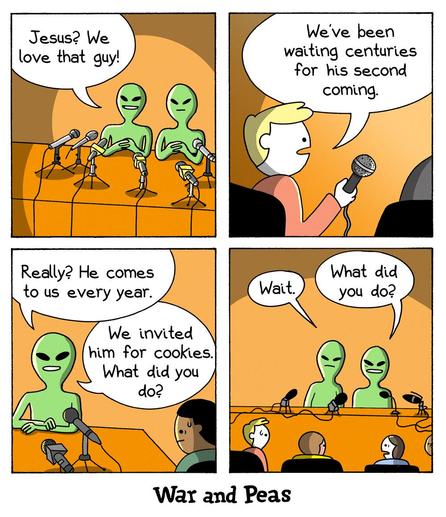

I wondered what incredible value we, the tech nerds, sacrificed a life for. Did the service make someone's work more productive? Did it help with studies? Generate jokes and memes? I found that it is called Character AI - "Personalized AI for every moment of your day". One can apparently chat with various characters or make custom ones. Some seem harmless. One claims to be a psychologist, a term I expected to be regulated. After browsing a dozen bots, I also found "my toxic boyfriend was just released from jail", "the popular guy who is stalking you", "you start liking your kidnapper", and "love, the ultimate sacrifice".

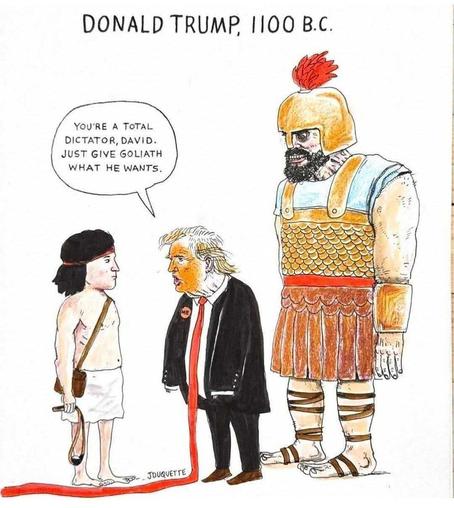

It is up to us in the tech sector to actively choose what impact AI should have on the world and whether we want these dystopian scenarios or not. People and democracies are dying. The only strong force that aims to contain the negative effects is the EU regulators. I frequently hear AI enthusiasts and techbros complain that we in Europe will lag behind because of regulations. If you are one of those, can I please ask you to quantify how many children, and how many elections lost to protofascists, would be acceptable to sacrifice, and what we would gain in return? Unless you are prepared to argue for a number higher than zero, please stop whining about regulations.

The family's lawyer said that if an adult had written what the chatbot did, they would be in jail for child abuse.

https://www.linkedin.com/posts/larsalbertsson_generative-ai-the-power-to-destroy-democracy-activity-7255652990596866048-AQa7

https://www.huffpost.com/entry/14-year-old-ai-chatbot-suicide_n_671a7184e4b00589e7dc308f