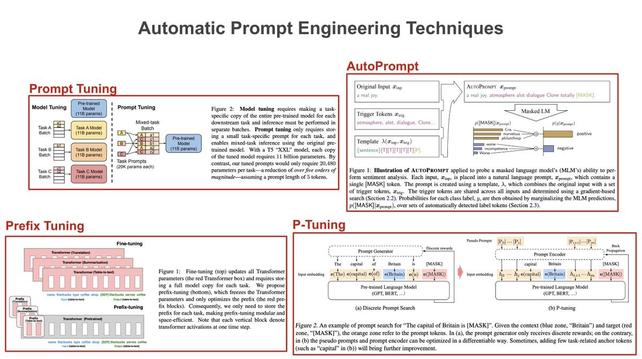

Prompt engineering for language models usually involves tweaking the wording or structure of a prompt. But recent research has explored automated prompt engineering via continuous updates (e.g., via SGD) to a prompt’s embedding. Here’s how these techniques work… 🧵 [1/8]
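A rough sketch of the "continuous updates to a prompt's embedding" idea, not the method from any particular paper: a frozen toy model (mean pooling plus a fixed linear head, both hypothetical stand-ins for a real language model) receives a few trainable "virtual token" embeddings prepended to its input, and SGD updates only those embeddings. The names `FROZEN_HEAD`, `predict`, and `sgd_step`, the data, and all sizes are assumptions made up for illustration.

```python
import math
import random

random.seed(0)
DIM = 4          # embedding width (toy value)
PROMPT_LEN = 2   # number of trainable "virtual token" embeddings

# Frozen toy "model": mean-pool all token embeddings, then a fixed linear head.
# These weights stand in for the language model and are never updated.
FROZEN_HEAD = [0.5, -0.3, 0.8, 0.1]

def predict(prompt, tokens):
    seq = prompt + tokens  # the soft prompt is prepended to the input embeddings
    pooled = [sum(tok[d] for tok in seq) / len(seq) for d in range(DIM)]
    logit = sum(w * h for w, h in zip(FROZEN_HEAD, pooled))
    return 1.0 / (1.0 + math.exp(-logit))

def mean_loss(prompt, data):
    # Average binary cross-entropy, with probabilities clipped away from 0 and 1.
    total = 0.0
    for tokens, label in data:
        p = min(max(predict(prompt, tokens), 1e-12), 1.0 - 1e-12)
        total -= label * math.log(p) + (1.0 - label) * math.log(1.0 - p)
    return total / len(data)

def sgd_step(prompt, tokens, label, lr):
    n = len(prompt) + len(tokens)
    err = predict(prompt, tokens) - label  # d(loss)/d(logit) for sigmoid + cross-entropy
    # d(logit)/d(prompt[i][d]) = FROZEN_HEAD[d] / n because of the mean pooling
    return [[vec[d] - lr * err * FROZEN_HEAD[d] / n for d in range(DIM)] for vec in prompt]

def random_tokens(count):
    return [[random.gauss(0.0, 1.0) for _ in range(DIM)] for _ in range(count)]

# Made-up task: steer the frozen model toward label 1.0 on random inputs.
data = [(random_tokens(3), 1.0) for _ in range(8)]
prompt = [[0.0] * DIM for _ in range(PROMPT_LEN)]

initial_loss = mean_loss(prompt, data)
for _ in range(50):
    for tokens, label in data:
        prompt = sgd_step(prompt, tokens, label, lr=1.0)
final_loss = mean_loss(prompt, data)

print(f"loss before: {initial_loss:.3f}, after: {final_loss:.3f}")
```

In this toy setup the prompt can only shift the model's logit by a constant, so it is far weaker than a soft prompt fed through a real transformer. The point is only the training loop: gradients flow to the prompt embeddings while the model weights stay fixed.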

Daniel Duma

The amount of chatter and speculation based on a "leaked" document by a random person who works for Google is kinda amazing.

---

RT @ZimingLiu11

To make neural networks as modular as brains, we propose brain-inspired modular training, resulting in modular and interpretable networks! The ability to directly see modules with naked eyes can facilitate mechanistic interpretability. It’s nice to see how a “brain” grows in NN!

https://twitter.com/ZimingLiu11/status/1654299718921383936

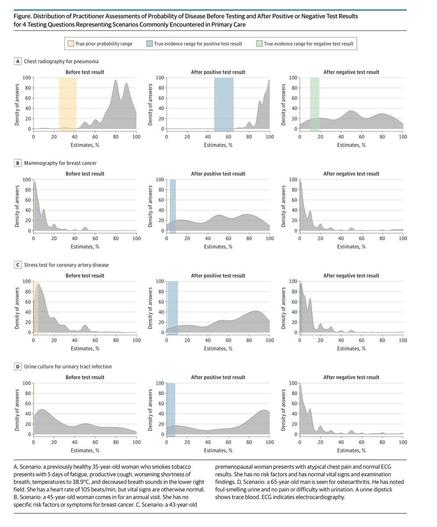

RT @emollick

Doctors, like most of us, could benefit from learning more statistical reasoning.

They vastly overestimate the odds of disease before testing, and continue to do so after both positive & negative test results! It held for all diseases, from cancer to UTIs https://jamanetwork.com/journals/jamainternalmedicine/fullarticle/2778364?utm_source=twitter&utm_campaign=content-shareicons&utm_content=article_engagement&utm_medium=social&utm_term=040621#.YGzBigWpvTE.twitter

RT @itsandrewgao

WTF: Mind reading is here.

Researchers invented a new #AI method to convert brain signals into video. See the results for yourself

Published in Nature yesterday: https://www.nature.com/articles/s41586-023-06031-6

What are the implications? Is this the biggest paper of 2023?

At today’s White House meeting on AI:

- OpenAI

- people who left OpenAI because it wasn’t focused enough on existential risk

- people who bought the exclusive rights to everything OpenAI makes

RT @MetaLawMan

1/ If the SEC follows through on its threat to sue @coinbase, I believe the SEC will lose.

The SEC's case has a fatal flaw.

And the problem is entirely of @GaryGensler's own making.

Let me explain...

Not sure about the source of this, but the content is fascinating.

Absolutely worth a read

---

RT @simonw

Leaked Google document: “We Have No Moat, And Neither Does OpenAI”

The most interesting thing I've read recently about LLMs - a purportedly leaked document from a researcher at Google talking about the huge strategic impact open source models are having

https://simonwillison.net/2023/May/4/no-moat/

https://twitter.com/simonw/status/1654158105221922816

Is this not simple wealth transfer from the company to the execs? 🤔

Assuming those employees were productive, the wealth of the company goes down over a certain time, while that of the execs goes up immediately.

---

RT @GergelyOrosz

When a company announces letting go 8% of staff - about 600 people - a month after sharing their executive compensation report, it's almost too obvious to compare the numbers.

For Unity:

The 5-person ex…

https://twitter.com/GergelyOrosz/status/1653798663917826048

Gergely Orosz on Twitter

“When a company announces letting go 8% of staff - about 600 people - a month after sharing their executive compensation report, it's almost too obvious to compare the numbers. For Unity: The 5-person exec team made $97M in 2022. Letting go 600 people will probably save ~$120M.”

Mojo is *far more* than a language for AI/ML applications. It’s actually a version of Python that allows us to write fast, small, easily-deployed applications that take advantage of all available cores and accelerators!

https://www.modular.com/mojo