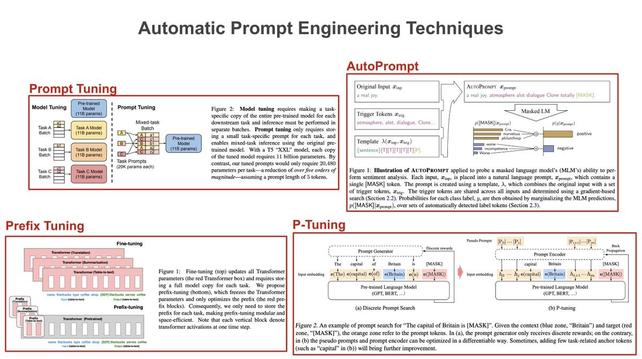

Prompt engineering for language models usually involves tweaking the wording or structure of a prompt. But recent research has explored automated prompt engineering via continuous updates (e.g., via SGD) to a prompt’s embedding. Here’s how these techniques work… 🧵 [1/8]

Daniel Duma

---

RT @ZimingLiu11

To make neural networks as modular as brains, we propose brain-inspired modular training, resulting in modular and interpretable networks! The ability to directly see modules with the naked eye can facilitate mechanistic interpretability. It’s nice to see how a “brain” grows in an NN!

https://twitter.com/ZimingLiu11/status/1654299718921383936

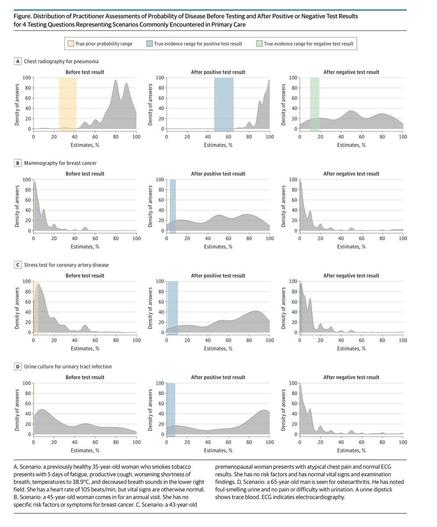

RT @emollick

Doctors, like most of us, could benefit from learning more statistical reasoning.

They vastly overestimate the odds of disease before testing, and continue to do so after both positive & negative test results! It held for all diseases, from cancer to UTIs https://jamanetwork.com/journals/jamainternalmedicine/fullarticle/2778364?utm_source=twitter&utm_campaign=content-shareicons&utm_content=article_engagement&utm_medium=social&utm_term=040621#.YGzBigWpvTE.twitter

RT @itsandrewgao

WTF: Mind reading is here.

Researchers invented a new #AI method to convert brain signals into video. See the results for yourself

Published in Nature yesterday: https://www.nature.com/articles/s41586-023-06031-6

What are the implications? Is this the biggest paper of 2023?

RT @KhoaVuUmn

"You can do this in R, and R is free!"

R:

How it started / How it’s going

RT @hwchase17

🚀Another way to supercharge retrieval on top of semantic search - reranking!

Just today @CohereAI released a brand new Rerank endpoint - here's how you can easily use it within a @LangChainAI retriever

Gist: https://gist.github.com/hwchase17/77ba7d4139d2ef6400991d7f10d2f894

RT @topofmlsafety

The Internal State of an LLM Knows When It's Lying

Detects the truthfulness of LLM outputs by training a classifier on hidden layer activations, outperforming existing few-shot methods.

https://arxiv.org/abs/2304.13734

The Internal State of an LLM Knows When It's Lying

While Large Language Models (LLMs) have shown exceptional performance in various tasks, one of their most prominent drawbacks is generating inaccurate or false information with a confident tone. In this paper, we provide evidence that the LLM's internal state can be used to reveal the truthfulness of statements. This includes both statements provided to the LLM, and statements that the LLM itself generates. Our approach is to train a classifier that outputs the probability that a statement is truthful, based on the hidden layer activations of the LLM as it reads or generates the statement. Experiments demonstrate that given a set of test sentences, of which half are true and half false, our trained classifier achieves an average of 71% to 83% accuracy labeling which sentences are true versus false, depending on the LLM base model. Furthermore, we explore the relationship between our classifier's performance and approaches based on the probability assigned to the sentence by the LLM. We show that while LLM-assigned sentence probability is related to sentence truthfulness, this probability is also dependent on sentence length and the frequencies of words in the sentence, resulting in our trained classifier providing a more reliable approach to detecting truthfulness, highlighting its potential to enhance the reliability of LLM-generated content and its practical applicability in real-world scenarios.

Many different (text-based) transformer architectures exist, but when and where should we use them? Here’s a quick list of four important transformer variants and the best applications to use them for…🧵[1/7]