This talks about the evolution of the Slug font rendering algorithm, and it includes an exciting announcement: The patent has been dedicated to the public domain.

https://terathon.com/blog/decade-slug.html

Low-level systems stuff. Reverse engineering, security research, bit twiddling, optimisation, SIMD, uarch. 64-bit ARM enthusiast.

he/they

| Blog | https://dougallj.wordpress.com |

| http://twitter.com∕dougallj∕status∕1590357240443437057.ê.cc/twitter.html | |

| Github | https://github.com/dougallj |

| Cohost | https://cohost.org/dougall |

We released two tech talks today going over how to take advantage of the new architecture, features and associated developer tools.

Accelerate your machine learning workloads with the M5 and A19 GPUs

https://developer.apple.com/videos/play/tech-talks/111432/

https://www.youtube.com/watch?v=wgJX1HndGl0

Boost your graphics performance with the M5 and A19 GPUs

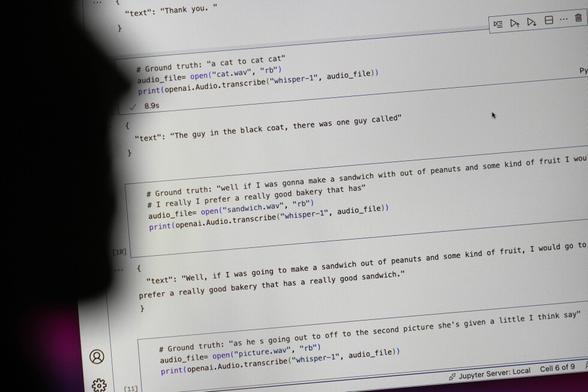

Whisper is a popular transcription tool powered by artificial intelligence, but it has a major flaw: it makes up things that were never said. Whisper was created by OpenAI. It's being used in many industries worldwide to translate and transcribe interviews, generate text in popular consumer technologies and create subtitles for videos. OpenAI has promoted Whisper as having near "human level robustness and accuracy." But more than a dozen computer scientists and software developers tell The Associated Press that isn't always the case and that it's prone to making up chunks of text and even entire sentences. An OpenAI spokesperson says the company studies how to reduce that and updates its models incorporating feedback received.

@pervognsen @wolfpld Yeah, but the LLMs can do something that can't be done without them. They're good for indexing images for search, rather than replacing the original copies with something more compact. Or providing a guess at handwriting in historical documents with a human in the loop.

(I'm not sure what you're actually using OCR for. Mostly I'm pasting text from screenshots, where I'd prefer Apple's OCR. It works very well and errors are unlikely to mislead me.)

@wolfpld @pervognsen Oh, and I see "SystemTracing" -> "SystemTraining" here. Errors like this are surprisingly hard to find by eye.

RE: https://mastodon.gamedev.place/@wolfpld/116088970554232592

@wolfpld @pervognsen For anyone reading along, the expected text is "Nie ma innych wątków!", and the test image and thread are here:

https://mastodon.social/@wolfpld@mastodon.gamedev.place/116088970688566418

@wolfpld @pervognsen DeepSeek-OCR 3B also hallucinated "llvm.pl.so.2"; it was clearly the worst I tested.

Some counter-examples, which Opus tells me are wrong, but garbled rather than hallucinated:

* "Nie ma innego wątków!" (Qwen3-VL 8B)

* "Nie ma imnych wetków!" (MiniCPM-V 4.5)

Bigger models are obviously *way* better, but I suspect you would see similar failure modes on borderline-legible text.