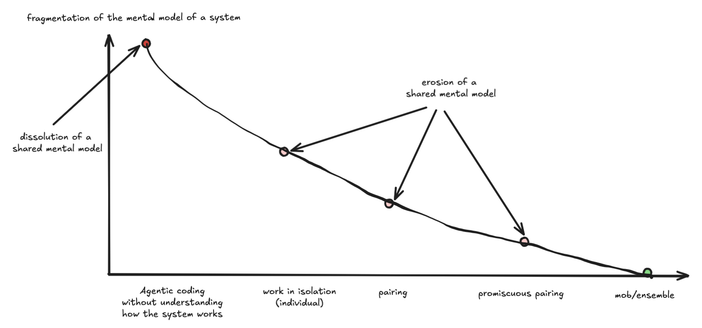

While preparing a talk, "Agentic coding - Systems Perspective", I realized that cognitive/comprehension debt didn't arrive with agentic coding. Most teams whose members work in isolation (individually) were already experiencing it heavily.

The difference is one of degree: on the spectrum of how fragmented a team's mental model of the system can be, we went from erosion to complete dissolution of the shared mental model.

I also have a new understanding of why teams doing pair/mob programming worked so well.