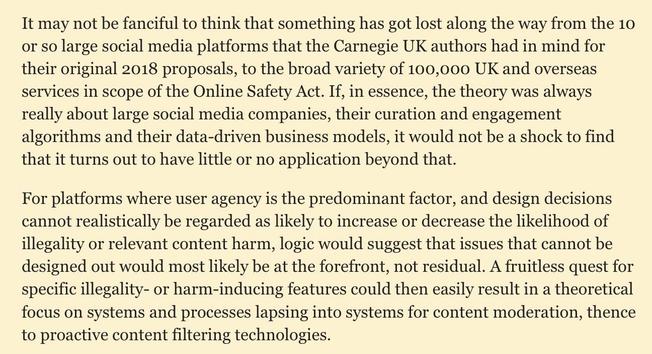

I've been on a bit of a journey reading about Systems Thinking over the last few weeks. Why, you may ask? Well, point one: I wanted to understand the arguments for #SafetyByDesign, as added to the UK #OnlineSafetyAct, which says that services must be "safe by design".

Blog: www.cyberleagle.com