Been on a bit of a journey reading about Systems Thinking in the last few weeks. Why is that, you may ask? Well: point one, I wanted to understand the arguments for #SafetyByDesign as added to the UK #OnlineSafetyAct which says that services must be "safe by design".

@jim I could actually buy that as a sensible requirement if social media platforms were actually designed by very serious people to meet social and cultural needs. But as a former dot-com era developer, all I can say is LOL ROFL. Nobody has a clue it's going to be a service people depend on and safety is an issue until it's too damn late! Until then it's just a neat toy a couple of devs are noodling with in their spare time for their own use.

@cstross It is a tall order for so many reasons. Closed vs open systems; adversarial or opposed goals of the regulator (risk reduction) and the platform (attention); low alignment between legality of content and risk; context dependency changing the nature of content and risk

@cstross Still: if alignment between the users, community and platform is high, e.g. with Mastodon, then safety is much more realisable.

@jim As I keep yelling at people, the profitability of a business model does not confer legitimacy: that kind of thinking, very much a Silicon Valley thing, is highly problematic in commercial social media, which monetize our social connections.

@cstross Quite. So the question is, which things are inside the safety "system"? If the business model is outside, then really it is just tech acting against "potentially problematic" content and users.

@jim I'm tempted to suggest as a rule of thumb that true security in social media starts with banning for-profit companies from running social media. (Non-profits? Sure. Charities? Sure. But Mark Zuckerberg or Elon Musk? Absolutely not.)

@cstross @jim The “drive engagement via outrage to sell ads” thing is not new: the Daily Mail, everything from News Corp and various other low quality outlets have been doing the same for many decades. Now X, Facebook and the rest don’t even have to pay “journalists” to make stuff up; there are enough racist scum who are happy to do that for free. Especially when the lies align with what the owners want in their heart of hearts.

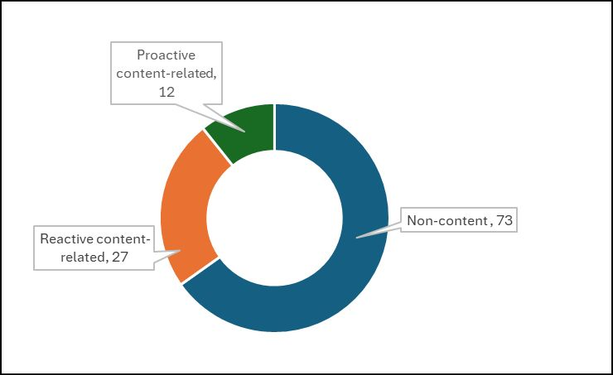

@bjn @cstross @jim Other than ‘think about safety at the design stage’ there is little clarity about what, in the context of the Online Safety Act, safety by design is supposed to mean. In the past its proponents have seen it as an alternative to content-focused measures, but now we have suggestions that e.g. automated content filtering is a safety by design measure. If, as has recently been suggested, the OSA should formally define it, we have to understand it first. https://www.cyberleagle.com/2026/02/safety-by-design-or-systems-for-content.html

@bjn @cstross @jim Lastly, an earlier piece on differing views of safety by design, written before the responses to the Ofcom Additional Measures consultation discussed in the more recent piece. https://www.cyberleagle.com/2024/12/safe-speech-by-design.html