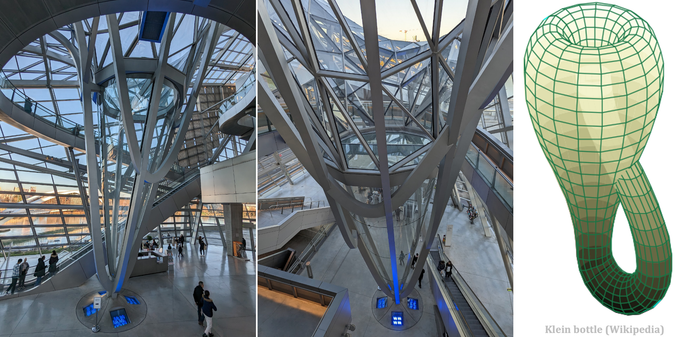

This is the Confluence Museum in Lyon, France, built in 2014, which has an interesting geometric shape. The Austrian architects from Coop Himmelblau missed an opportunity to integrate a Klein bottle ...

Principal Scientist at @NaverLabsEurope, Lead of "Spatial AI" team. AI for Robotics, Computer Vision, Machine Learning. Austrian living in France.

https://chriswolfvision.github.io/www