https://wordpress.org/news/2026/05/wp23/

This is a wild thing to write after doing all of the following:

- Demanding that WP Engine and/or Silver Lake pay 8% of their revenues back to the project as part of WordPress’ “Five for the Future” initiative, a request that many viewed as extortionate.

- Prohibiting WP Engine employees from updating WP Engine-owned plugins on the WordPress plugin repository.

- Adding a checkbox to the WordPress.org login screen that made users declare that they were not “affiliated with WP Engine in any way”.

- Kicking WP Engine employees off WordCamp organizing teams and barring WP Engine from sponsoring WordCamps.

- Wresting control of WP Engine-owned plugins in the WordPress plugin repository.

- Kicking WP Engine employees off of the WordPress community Slack team.

- Blocking any website hosted on WP Engine from the automatic, easily accessed core, theme, and plugin update process that sites on any other hosting provider enjoy.

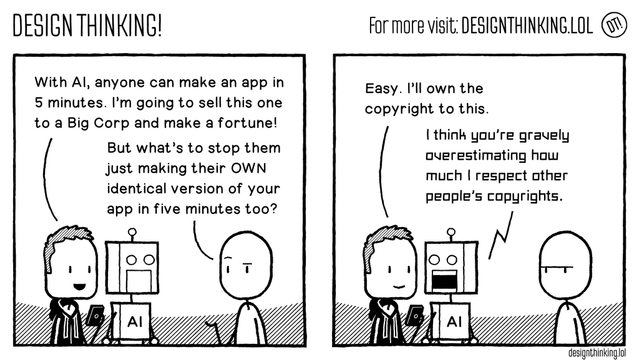

This is just classic narcissist behavior. You attempted to exploit the relationship you had with WordPress and the community with a wild sense of entitlement. You became obsessively competitive with a company that had peacefully coexisted alongside yours for years and years. Now that you have lost interest in the initial competitive action (because it was a stupid idea), you are attempting to shift blame and create a false narrative that you are the victim here because your actions have consequences.

The correct answer is to resign. Do it with a community-involved transition plan, make it very celebratory, try to bring the good vibes back. You are out of control and clearly lack a corporate structure that has anyone capable of reining you in. You made an unhinged attempt at shaking down another company and, like many narcissists, you cannot process that merely losing interest in the initial actions that kicked off this chain of events isn't sufficient.